Clinicians and Scientists: Turn to AI, Not into AI

¹Office of Research Integrity (ORI), Sun Yat-sen Memorial Hospital, Sun Yat-sen University, 510000 Guangzhou, Guangdong, P. R. China

*Correspondence to: Phei Er Saw, E-mail: caipeie@mail.sysu.edu.cn

Published Online: December 8, 2025

Cite this paper:

Lin G, Cui X, Xie M et al. Clinicians and Scientists: Turn to AI, Not into AI. BIO Integration 2025; 6: 1–5.

DOI: 10.15212/bioi-2025-0188. Available at: https://bio-integration.org/


© 2025 The Authors. This is an open access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0/). See https://bio-integration.org/copyright-and-permissions/

Artificial intelligence (AI) is rapidly reshaping research across disciplines. In tasks as diverse as automating literature reviews and decoding complex datasets, AI has become central to modern inquiry. However, its rise has also prompted critical questions regarding human judgment, ethics, and dependence on machine outputs. Although AI increases efficiency, it cannot replace the creativity, intuition, and moral responsibility that underpin credible research. The challenge is therefore not to surrender human intellect to algorithms but to cultivate a partnership between them.

Current use of AI in research

AI now permeates nearly every research field and has transformed how data are gathered, analyzed, and interpreted. In data-heavy domains, such as genomics, climate science, and finance, AI can detect correlations and patterns in datasets far too large for manual analysis [1, 2]. Biomedical researchers use AI to accelerate drug discovery, optimize clinical trials, and refine diagnostics [3], whereas social scientists use sentiment analysis and behavioral modeling to probe social trends [4].

AI’s ability to automate literature reviews has been another major advance. As academic output grows rapidly, natural language processing tools can summarize large bodies of literature, pinpoint research gaps, and extract key findings. Consequently, researchers can spend less time collecting information and more time interpreting it [5]. The growing presence of AI technology in higher education and research underscores this trend. Moreover, AI can assist in scientific writing by improving grammar, structure, and citation accuracy, and it can even streamline the publication process [6].

However, the expanding roles of AI also warrant caution. Models trained on biased data can yield misleading results, and the opaque “black box” nature of many algorithms hinders transparency and accountability [7]. As research grows increasingly dependent on AI, ensuring scientific integrity will be essential. The advantages and risks of using AI in research are summarized in Table 1 below.

Table 1 Advantages and Risks of Using AI in Research

| Advantage | Meaning | Typical examples | Research impact | References |
|---|---|---|---|---|
| Efficiency gains | AI automates data cleaning, coding, and routine analytics, so researchers spend less time on manual tasks. | Batch-processing survey data; automatic transcription and coding of interviews; rapid image preprocessing | Shorter project cycles; more time for theory-building and interpretation | [22] |
| Speed at scale | AI processes massive datasets rapidly, enabling near-real-time analyses that would be infeasible manually. | Running millions of simulation iterations; screening thousands of articles against inclusion/exclusion criteria | Faster iteration loops; timely insights into dynamic phenomena (e.g., outbreaks or markets) | [23] |
| Increased accuracy | Automated, statistically robust pipelines reduce arithmetic and transcription errors and apply rules consistently. | Programmatic error checking; probabilistic modeling with uncertainty quantification | Fewer avoidable errors; more precise effect estimates and forecasts | [23] |
| Cost reduction | Automation reduces reliance on large research assistant (RA) teams for repetitive tasks and cuts compute/analysis hours through optimized pipelines. | Automated literature screening; scripted figure/table generation | Lower per-study budgets; small teams can execute large projects | [24] |
| Enabling innovation | AI enables new methods (representation learning, generative simulation) and the exploration of previously intractable spaces. | Modeling high-dimensional omics data; discovering novel materials via generative design | Ability to answer new questions; advances at methodological frontiers | [25] |

| Risk | Meaning | Concrete failure modes | Consequences for research | References |
|---|---|---|---|---|
| Automation bias | Researchers defer to model outputs without adequate skepticism or triangulation. | Accepting a classification threshold as “ground truth” without sensitivity checks | Overconfident conclusions; under-reported uncertainty | [26] |
| Data bias and flawed training | Biased, low-quality, or distribution-shifted training data propagate errors into outputs. | Skewed medical datasets leading to unequal performance across subgroups | Systematic bias; compromised external validity and credibility | [27, 28] |
| Limited creativity and critical thinking | Models generate plausible text/code but do not originate conceptual leaps or hypotheses. | Overreliance on AI-drafted hypotheses that lack novelty or causal logic | Stagnation in idea generation; weak explanatory frameworks | [10] |
| Privacy and data protection | Tools require large, sometimes sensitive datasets, and requirements for handling and consent are often unclear. | Uploading identifiable patient records to third-party services | Legal/ethical exposure; loss of participant trust; IRB violations | [29] |
| Plagiarism/fabrication risks | Generative tools can reproduce text or fabricate citations, data, or methods. | Hallucinated references; synthetic “results” framed as empirical | Research-integrity breaches; retractions and reputational harm | [30] |

Smartly applying AI to enhance research performance

To maximize AI’s benefits and limit its risks, researchers must use it strategically, as an assistant rather than a substitute for human expertise. Critical interpretation and ethical judgment remain essential, as does active oversight of all AI-generated outputs. High-quality, unbiased data are crucial, because flawed inputs can lead to unreliable results [8]. Transparency should also be standard practice: to ensure reproducibility and accountability, researchers must document their use of AI in analysis, hypothesis building, and interpretation [9]. Equipping researchers with AI literacy is equally important; institutions should provide training on AI’s methods, limitations, and ethical use. Establishing AI ethics committees could further safeguard responsible integration and uphold research integrity [10].

Human–AI collaboration: a sociotechnical perspective

Beyond technical and ethical debates, human–AI collaboration in research is best viewed through a sociotechnical lens. Science has always evolved with its tools, and AI is simply the latest stage in this co-development. Rather than being an independent actor, AI functions within an interdependent system in which humans provide meaning and creativity, and machines deliver computation and scale. This framework reflects the idea of “extended cognition,” in which technology enhances but does not replace human intellect. Genuine innovation emerges when researchers critically interpret AI outputs within ethical and disciplinary contexts. Ultimately, research integrity will hinge not only on technical oversight but also on understanding how humans and intelligent systems can co-create knowledge responsibly.

Case studies: international academic controversies over AI-generated work

AI misuse in UK higher education

The rapid uptake of AI tools among UK students has raised major academic integrity concerns. Surveys suggest that nearly 90% of undergraduates use AI technologies such as ChatGPT for assignments, research, or writing support [11]. Although such tools can facilitate learning, their unchecked use has been linked to a rise in academic misconduct cases [10]. A key issue is the absence of clear, unified institutional guidelines. Because most UK universities still lack consistent AI policies, students remain uncertain about what constitutes acceptable AI use. Existing rules vary across institutions, faculties, and even individual instructors, which creates confusion [12].

This inconsistency also exposes a double standard: whereas students are penalized for AI-generated work, many faculty members use AI for research, communication, and grading [13]. The current debate underscores the need for transparent, standardized AI policies that balance innovation with fairness and uphold academic integrity [14].

Controversies regarding AI-generated academic papers

AI’s growing role in academic publishing has triggered global ethical debates. Leading journals such as Science now require authors to disclose any AI use in data analysis, text editing, or literature review, and prohibit the use of AI to replace core intellectual contributions such as hypothesis formation or interpretation. However, compliance with these policies remains uneven: some researchers misuse AI or fail to disclose its involvement. In a notable case at a Chinese “211 Project” university, an SCI-indexed paper contained phrasing and structure resembling ChatGPT output, prompting doubts about authorship integrity. The incident reignited concerns that AI-generated writing can blur authorship boundaries, mislead readers, and compromise scientific rigor. In response, universities and publishers are tightening regulations [15]: many institutions now mandate AI-use disclosures, and leading journals are testing AI-detection tools to identify undisclosed machine-generated content [16].

Human vs. AI: when are we the winner?

Despite AI’s rapid progress in several essential domains, it has not surpassed human intellect in the capacities that matter most for research. Although AI can analyze data, detect patterns, and generate text, it lacks the reasoning, ethical discernment, and creativity that underpin high-quality research. The limits of AI are clearest in hypothesis generation and theoretical innovation: AI can identify correlations, but it lacks the curiosity and paradigm-challenging insight that drive breakthroughs. Ethical and moral decision-making must also remain uniquely human. Research in bioethics, law, and social policy requires empathy and moral reasoning beyond the capabilities of programmed frameworks. Similarly, qualitative and interpretive disciplines, such as anthropology, history, and literature, depend on context, symbolism, and emotion that AI cannot authentically grasp.

Human researchers also excel in interdisciplinary synthesis and contextual awareness, connecting ideas across fields in ways that AI cannot readily replicate [17]. True creativity, rooted in imagination, originality, and lived experience, remains beyond algorithmic reach [18] (Box 1). In emotional and psychological research, empathy and human connection are indispensable: AI can detect sentiment but cannot comprehend genuine emotion or consciousness [19, 20]. Field research and community engagement, which rely on trust and adaptability, similarly require human presence [21]. Ultimately, AI can serve as a powerful collaborator but not as a human replacement. Scientific progress depends on balancing AI’s computational strength with human creativity, ethics, and insight. Used as a partner rather than a proxy, AI can enhance research while preserving what makes discovery profoundly human [17].

Box 1 Limits of AI in Creating Accurate Scientific Figures

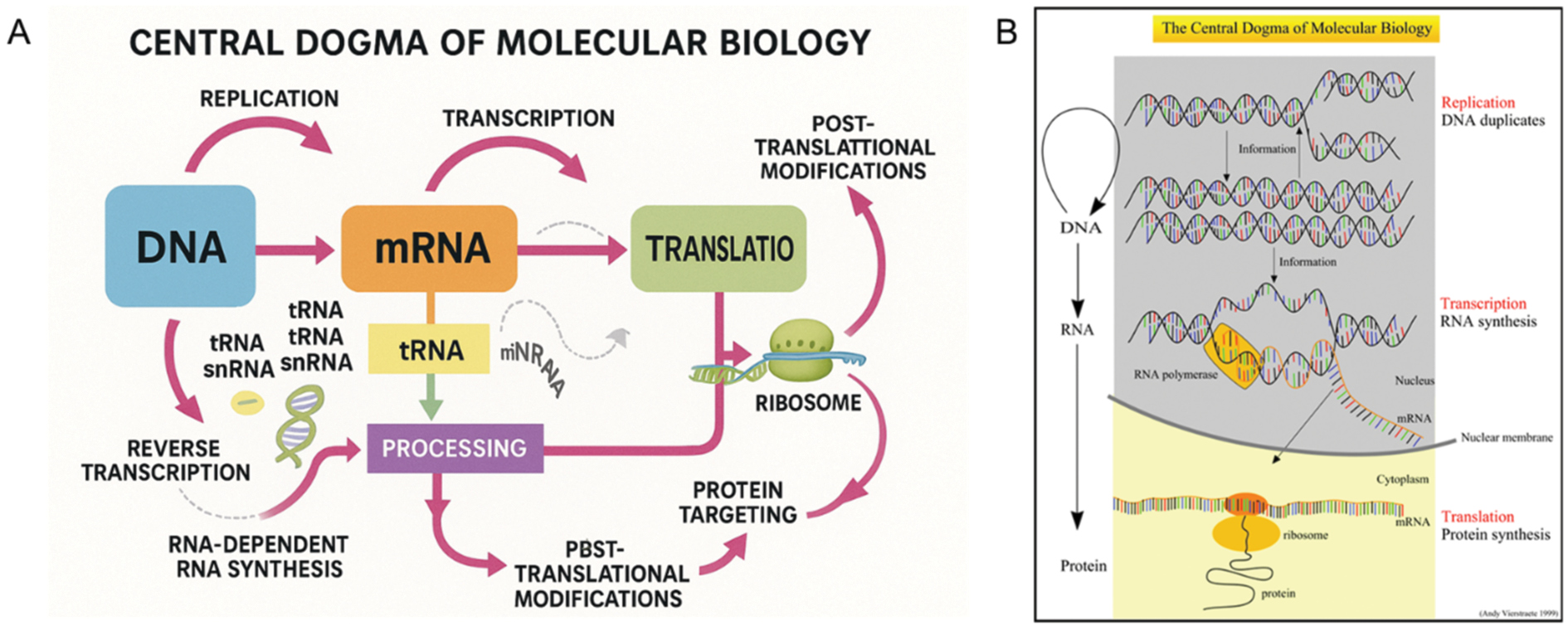

AI shows impressive potential across research domains, yet its limitations become clear when it is tasked with producing scientifically accurate figures. Although AI-generated images appear visually appealing, they often fail to meet the precision and clarity required for scientific communication. For example, when asked to illustrate the central dogma of molecular biology, an AI model produced a figure (Figure 1A) that omitted essential components, misrepresented molecular structures, and used incorrect or nonsensical labels. Such errors compromise scientific validity and render figures unsuitable for education or research use. In contrast, a manually created diagram (Figure 1B) correctly represents transcription and translation, uses accurate terminology, and adheres to accepted conventions, ensuring clarity and reliability.

Figure 1 Stark contrast between an AI-generated scientific figure and a correct, manually drawn figure. Panel (A) was generated by ChatGPT5.1. Panel (B) is courtesy of Andy Vierstraete, University of Ghent. These discrepancies highlight AI’s difficulty with tasks that require deep conceptual understanding and domain expertise.

Although AI excels at automating analysis and literature synthesis, generating accurate scientific visuals still requires human oversight. A practical approach is a hybrid workflow in which AI produces preliminary drafts and human experts verify and refine them. With continued advances in training data and human-in-the-loop design, AI may achieve greater accuracy in the future; for now, however, expert supervision remains essential.

A note of caution

As AI becomes integral to research, maintaining ethical and responsible practices is paramount. The Office of Research Integrity warns against plagiarism and data fabrication, risks that are amplified by AI-generated text and results. All AI outputs must be verified for originality and accuracy against established literature and methods. Transparency is equally essential: researchers must clearly disclose how AI was used in their methods sections to preserve credibility and comply with integrity policies. Data privacy and security also require vigilance; because many AI tools rely on sensitive datasets, strict adherence to data protection laws and institutional governance protocols is necessary. Finally, the potential misuse of AI to manipulate findings, e.g., through fabricated data, deepfakes, or misleading conclusions, poses serious threats to scientific trust. Institutions must deploy robust detection systems and enforce human oversight to prevent unethical practices. As AI continues to evolve, responsible integration will remain critical to upholding research integrity and protecting the credibility of science itself.

Future outlook

The integration of AI into healthcare and academia holds immense promise but also poses major challenges related to transparency, security, and integrity. In healthcare, a major limitation is the lack of explainability in AI models. Diagnostic algorithms often output results without revealing their reasoning, which makes their reliability difficult for clinicians to assess, particularly in complex or rare cases. For example, deep learning models are prone to misdiagnosing rare diseases because few training examples are available. This lack of transparency can erode trust between medical professionals and AI systems. Data privacy further compounds the risks, because models trained on sensitive patient data might inadvertently expose information or reinforce biases that produce inequities in care across racial or gender groups.

In academia, AI-generated content has prompted concerns regarding plagiarism, data fabrication, and authorship disputes. Tools may replicate existing research or fabricate findings, blurring the boundaries of originality and ownership. The growing presence of unverified, AI-produced papers threatens scholarly credibility. To address these risks, institutions must establish clear guidelines governing AI use, authorship, and data ethics. Only through transparent policies and responsible oversight can AI’s transformative potential be realized without compromising trust or academic integrity.

Conclusion

AI is undeniably a valuable tool with the potential to enhance research across disciplines. However, the key to its successful integration will be a balanced approach that leverages AI for efficiency while preserving human critical thinking and ethical judgment. Researchers must use AI only as an aid, never allowing it to take control of the scientific process. By promoting transparency, upholding ethical standards, and ensuring rigorous validation of AI-assisted research, the research community can harness the power of AI without compromising the integrity of scientific inquiry. The future of research depends on using AI wisely rather than blindly surrendering to it. Researchers, do not lose your unique creativity!

Data availability statement

Not applicable.

Ethics statement

No direct interactions with human or animal subjects were involved. Therefore, ethical approval and informed consent were not required.

Funding

This work is supported by the National Natural Science Foundation of China (J2024019) and the Guangdong Natural Science Foundation (No. 2021A1515012008). This work is also partially supported by Guangzhou Municipal Bureau grants (202201020576 and 2024A03J1052).

Conflicts of interest

Phei Er Saw is an editorial board member of BIO Integration. She was not involved in the peer review or handling of the manuscript. The other authors have no competing interests to disclose.

References

1. Philip Chen CL, Zhang C-Y. Data-intensive applications, challenges, techniques and technologies: a survey on Big Data. Inf Sci 2014;275:314-47. [DOI: 10.1016/j.ins.2014.01.015]

2. Li X, Guo Y. Paradigm shifts from data-intensive science to robot scientists. Sci Bull 2025;70(1):14-8. [PMID: 39389869 DOI: 10.1016/j.scib.2024.09.029]

3. Ocana A, Pandiella A, Privat C, Bravo I, Luengo-Oroz M, et al. Integrating artificial intelligence in drug discovery and early drug development: a transformative approach. Biomark Res 2025;13(1):42. [PMID: 40087789 DOI: 10.1186/s40364-025-00758-2]

4. Xu R, Sun Y, Ren M, Guo S, Pan R, et al. AI for social science and social science of AI: a survey. Inf Process Manag 2024;61(3):103665. [DOI: 10.1016/j.ipm.2024.103665]

5. Alhur AA, Khlaif ZN, Hamamra B, Hussein E. Paradox of AI in higher education: qualitative inquiry into AI dependency among educators in Palestine. JMIR Med Educ 2025;11:e74947. [PMID: 40953310 DOI: 10.2196/74947]

6. Lin Z. Techniques for supercharging academic writing with generative AI. Nat Biomed Eng 2024;9(4):426-31. [PMID: 38499642 DOI: 10.1038/s41551-024-01185-8]

7. von Eschenbach WJ. Transparency and the black box problem: why we do not trust AI. Philos Technol 2021;34(4):1607-22. [DOI: 10.1007/s13347-021-00477-0]

8. Merhi MI. An evaluation of the critical success factors impacting artificial intelligence implementation. Int J Inf Manag 2023;69:102545. [DOI: 10.1016/j.ijinfomgt.2022.102545]

9. Balasubramaniam N, Kauppinen M, Rannisto A, Hiekkanen K, Kujala S. Transparency and explainability of AI systems: from ethical guidelines to requirements. Inf Software Technol 2023;159:107197. [DOI: 10.1016/j.infsof.2023.107197]

10. Nguyen KV. The use of generative AI tools in higher education: ethical and pedagogical principles. J Acad Ethics 2025;23(3):1435-55. [DOI: 10.1007/s10805-025-09607-1]

11. Stöhr C, Ou AW, Malmström H. Perceptions and usage of AI chatbots among students in higher education across genders, academic levels and fields of study. Comput Educ Artif Intell 2024;7:100259. [DOI: 10.1016/j.caeai.2024.100259]

12. Balalle H, Pannilage S. Reassessing academic integrity in the age of AI: a systematic literature review on AI and academic integrity. Soc Sci Humanit Open 2025;11:101299. [DOI: 10.1016/j.ssaho.2025.101299]

13. Dabis A, Csáki C. AI and ethics: investigating the first policy responses of higher education institutions to the challenge of generative AI. Humanit Soc Sci Commun 2024;11(1):1006. [DOI: 10.1057/s41599-024-03526-z]

14. Funa AA, Gabay RAE. Policy guidelines and recommendations on AI use in teaching and learning: a meta-synthesis study. Soc Sci Humanit Open 2025;11:101221.

15. Lin Z. Towards an AI policy framework in scholarly publishing. Trends Cogn Sci 2024;28(2):85-8. [PMID: 38195365 DOI: 10.1016/j.tics.2023.12.002]

16. Moorhouse BL, Yeo MA, Wan Y. Generative AI tools and assessment: guidelines of the world’s top-ranking universities. Comput Educ Open 2023;5:100151. [DOI: 10.1016/j.caeo.2023.100151]

17. Xu Y, Liu X, Cao X, Huang C, Liu E, et al. Artificial intelligence: a powerful paradigm for scientific research. Innovation 2021;2(4):100179. [DOI: 10.1016/j.xinn.2021.100179]

18. Lockhart ENS. Creativity in the age of AI: the human condition and the limits of machine generation. J Cult Cogn Sci 2024;9(1):83-8. [DOI: 10.1007/s41809-024-00158-2]

19. Velagaleti S, Choukaier D, Nuthakki R, Lamba V, Sharma V, et al. Empathetic algorithms: the role of AI in understanding and enhancing human emotional intelligence. J Electr Syst 2024;20(3s):2051-60. [DOI: 10.52783/jes.1806]

20. Runco MA. AI can only produce artificial creativity. J Creativity 2023;33(3):100063. [DOI: 10.1016/j.yjoc.2023.100063]

21. Jiang T, Sun Z, Fu S, Lv Y. Human-AI interaction research agenda: a user-centered perspective. Data Inf Manag 2024;8(4):100078. [DOI: 10.1016/j.dim.2024.100078]

22. Zong Z, Guan Y. AI-driven intelligent data analytics and predictive analysis in industry 4.0: transforming knowledge, innovation, and efficiency. J Knowl Econ 2024;16(1):864-903. [DOI: 10.1007/s13132-024-02001-z]

23. Bzdok D, Nichols TE, Smith SM. Towards algorithmic analytics for large-scale datasets. Nat Mach Intell 2019;1(7):296-306. [DOI: 10.1038/s42256-019-0069-5]

24. Akinnagbe OB. Human-AI collaboration: enhancing productivity and decision-making. Int J Educ Manag Technol 2024;2(3):387-417. [DOI: 10.58578/ijemt.v2i3.4209]

25. Ting CK. Driving AI innovation through computational intelligence [Editor’s remarks]. IEEE Comput Intell Mag 2025;20(1):2-3. [DOI: 10.1109/MCI.2024.3504892]

26. Zhai C, Wibowo S, Li LD. The effects of over-reliance on AI dialogue systems on students’ cognitive abilities: a systematic review. Smart Learn Environ 2024;11(1):28. [DOI: 10.1186/s40561-024-00316-7]

27. Hanna MG, Pantanowitz L, Jackson B, Palmer O, Visweswaran S, Pantanowitz J, et al. Ethical and bias considerations in artificial intelligence/machine learning. Mod Pathol 2025;38(3):100686. [PMID: 39694331 DOI: 10.1016/j.modpat.2024.100686]

28. Wei X, Kumar N, Zhang H. Addressing bias in generative AI: challenges and research opportunities in information management. Inf Manag 2025;62(2):104103. [DOI: 10.1016/j.im.2025.104103]

29. Paul J. Privacy and data security concerns in AI. 2024.

30. Elali FR, Rachid LN. AI-generated research paper fabrication and plagiarism in the scientific community. Patterns 2023;4(3):100706. [DOI: 10.1016/j.patter.2023.100706]