Artificial Intelligence in Pediatric Ultrasound: An Update and Future Applications

1Key Laboratory of Medical Imaging Precision Theranostics and Radiation Protection, College of Hunan Province, The Affiliated Changsha Central Hospital, Hengyang Medical School, University of South China, Changsha, China

2Institute of Medical Imaging, Hengyang Medical School, University of South China, Hengyang, China

3The Seventh Affiliated Hospital, Hunan Veterans Administration Hospital, Hengyang Medical School, University of South China, Changsha, Hunan, China

4West China Biomedical Big Data Center, West China Hospital, Sichuan University, Chengdu, China

5Department of Radiology, Zhujiang Hospital of Southern Medical University, Guangzhou, China

6College of Computer and Information Science, Chongqing Normal University, Chongqing, China

7National Center for Applied Mathematics in Chongqing, Chongqing Normal University, Chongqing, China

8Department of Orthopedics, The First Affiliated Hospital of Bengbu Medical University, Laboratory of Tissue and Transplant in Anhui Province, Bengbu Medical University, Bengbu, China

9Research Centre for Intelligent Healthcare, Coventry University, Richard Crossman Building, Coventry CV1 5RW, UK

10Department of Orthopedics, Union Hospital, Tongji Medical College, Huazhong University of Science and Technology, Wuhan, China

*Correspondence to: Haipeng Liu, Centre for Intelligent Healthcare, Coventry University, Richard Crossman Building, Jordan Well, Coventry CV1 5RW, UK, E-mail: haipeng.liu@coventry.ac.uk; Zhewei Ye, Orthopaedic Surgery Union Hospital, Tongji Medical College, Huazhong University of Science and Technology, 1277 Jiefang Avenue, Wuhan 430022, China, E-mail: yezhewei@hust.edu.cn

Received: July 16 2025; Revised: October 3 2025; Accepted: October 13 2025; Published Online: November 20 2025.

Cite this paper:

Kuang C, Jiang Z, Wang Y et al. Artificial Intelligence in Pediatric Ultrasound: An Update and Future Applications. BIO Integration 2025; 1–21.

DOI: 10.15212/bioi-2025-0130. Available at: https://bio-integration.org/

© 2025 The Authors. This is an open access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/4.0/). See https://bio-integration.org/copyright-and-permissions/

Abstract

The emerging application of artificial intelligence (AI) in pediatric ultrasound has shown significant potential to improve diagnostic accuracy and efficiency, particularly in addressing the challenges of conventional ultrasound in operator dependence, inconsistent image quality, and limited quantitative analysis capabilities. These limitations arise from the inherent complexity of pediatric ultrasound image interpretation, such as organ immaturity, motion artifacts, and intestinal gas interference. AI can enhance structural recognition, offering automated, standardized measurements. AI applications can also assist non-expert physicians in enhancing diagnostic accuracy. This review summarizes recent advances in AI applications for pediatric ultrasound across different systems, including preliminary diagnosis, screening, detailed analysis, and decision support, while providing a detailed discussion of technical advances, unmet challenges, and future directions. Future research can focus on intelligent cross-system feature analysis frameworks, translational application of AI-driven pediatric ultrasound in multi-disease diagnosis, and fine-tuned models for personalized treatment based on large-scale randomized controlled trials. This review provides an up-to-date reference for clinicians, ultrasound technicians, researchers, and biomedical engineers.

Keywords

Artificial intelligence, decision support, diagnosis, pediatric, ultrasound.

Introduction

Ultrasound (US) is widely used to diagnose and monitor pediatric diseases across different organ systems. US provides detailed real-time dynamic imaging of the lesion area in children and has a critical role in identifying disease etiologies, assessing disease progression, and guiding clinical decision-making [1]. Currently, both two-dimensional ultrasound (2DUS) and three-dimensional ultrasound (3DUS) are extensively used to diagnose pediatric gastrointestinal intussusception, evaluate the severity of hydronephrosis in the urinary system, and predict periventricular leukomalacia (PVL) in the neurologic system at an early stage [2, 3]. Acquiring high-quality pediatric US images is crucial for accurate diagnosis and treatment. However, challenges arise during examinations due to low patient cooperation; specifically, image quality is often compromised by motion artifacts resulting from crying and agitation. Furthermore, immature pediatric organs lead to disproportionate organ-to-body ratios and diminished tissue density gradients, causing acoustic interface artifacts that obscure regions of interest [4, 5]. These factors can complicate examinations and lead to diagnostic errors. The subjectivity of measurements and observer variability further impact the acquisition of standardized images, quantitative assessments, and precise diagnoses [3].

Over the past decade, artificial intelligence (AI)-based automated measurement and assessment systems have been introduced to reduce intra- and inter-observer variability and improve diagnostic accuracy [6, 7]. AI applications aimed at improving pediatric US accuracy include three primary features: structural recognition; automated standardized measurements; and diagnostic classification. Given the time-consuming nature of pediatric US examinations, AI can also shorten procedural duration and enhance workflow efficiency [8]. Although numerous AI-assisted technologies and commercial software have been developed to provide high-resolution imaging and accurate measurements in pediatric US, much of the related research remains in the early stages. In addition to the complexity of ever-changing model properties, there are concerns about the reliability of the models, especially of their decision-making processes. Moreover, current research has been mainly limited to the independent analysis of single diseases or systems; feature integration across organs and systems has not yet been achieved for pediatric US, which leaves a gap on the path to accurate diagnosis and personalized treatment. Finally, despite rapid technical advances, a comprehensive summary of the state of the art from a clinical perspective is lacking.

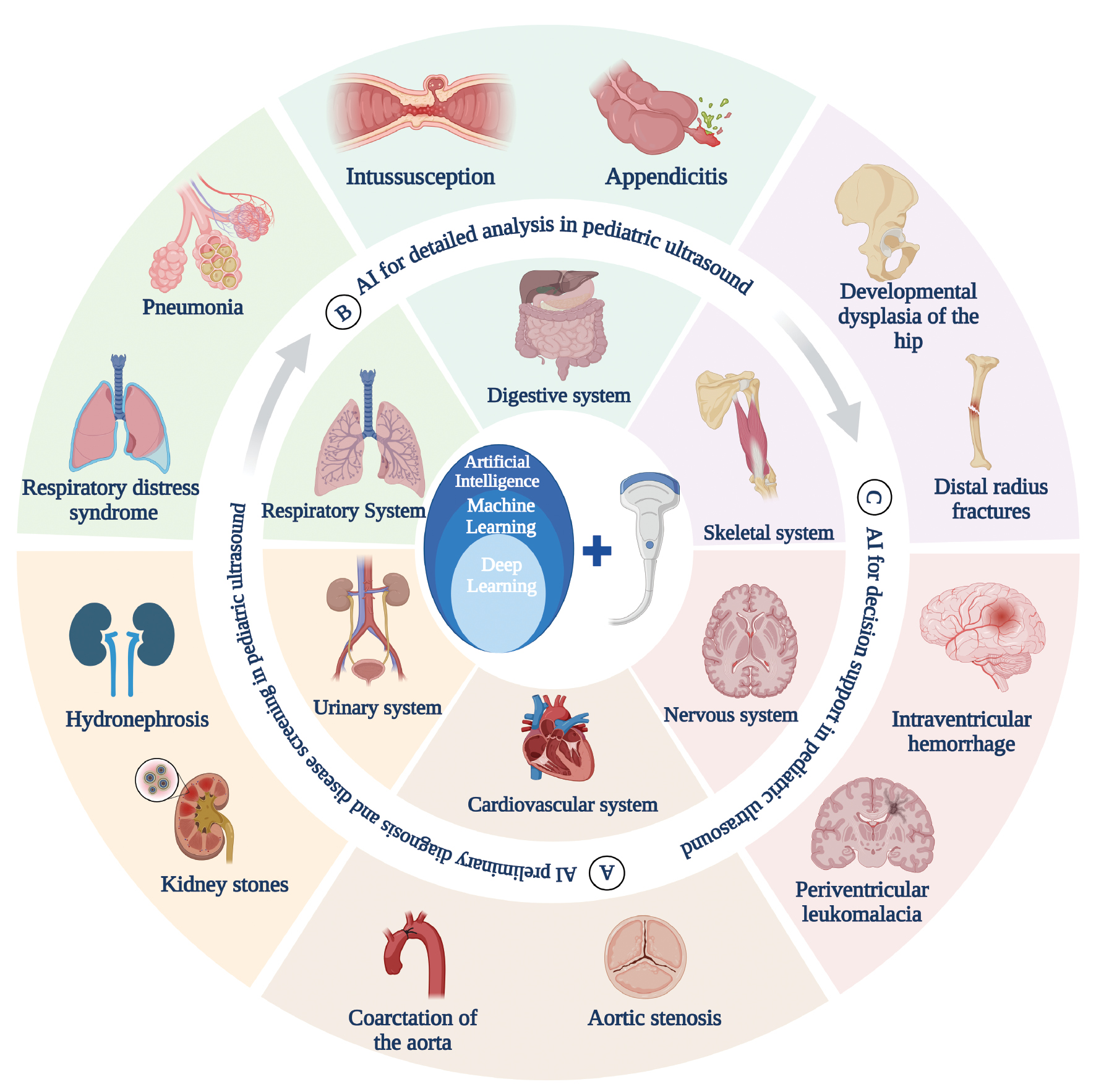

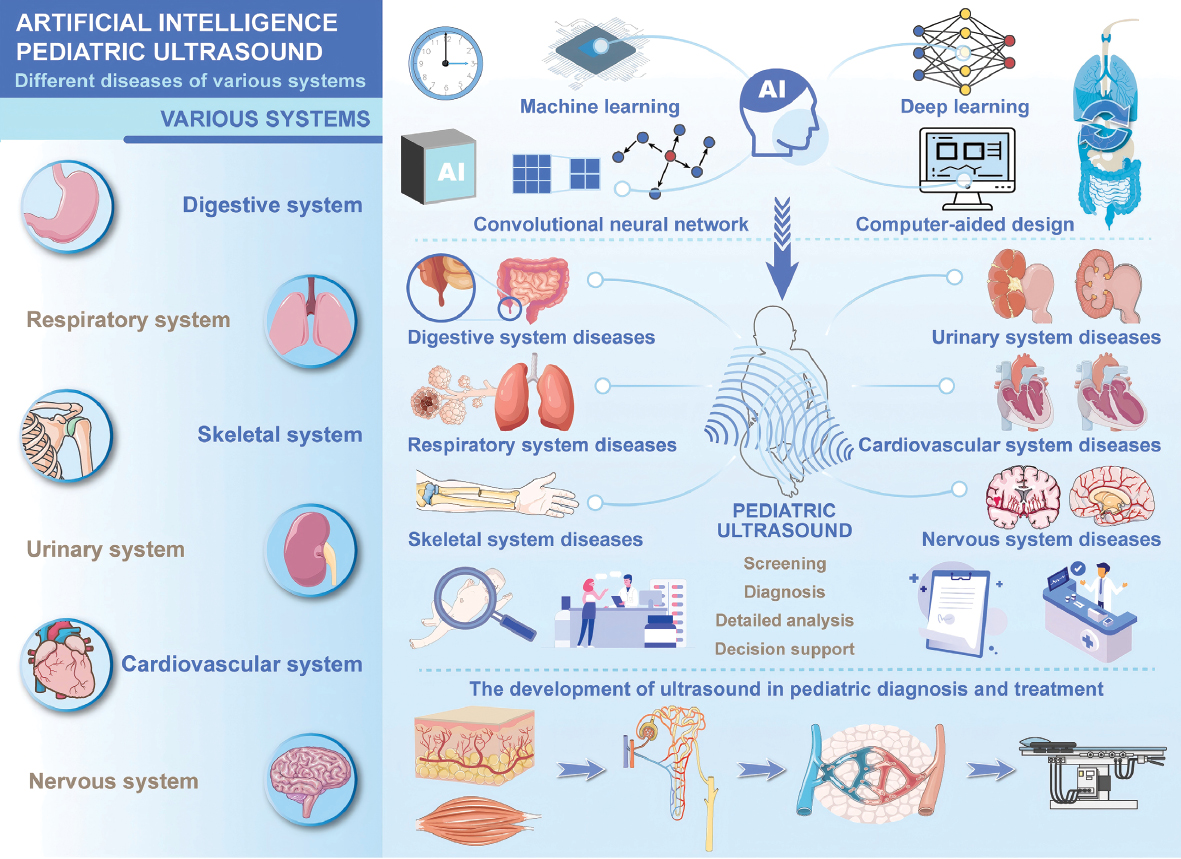

To address the research gaps, this review will provide a concise overview of the cutting-edge AI technologies applied in pediatric US. Applications in image processing, data-driven diagnosis, and decision support are summarized, highlighting the potential in upgrading AI-enhanced diagnostic tools from single-disease to multi-system diagnosis (Figure 1). Current challenges in different dimensions (e.g., data, algorithm, and ethics) are discussed to derive potential future directions.

Figure 1 Application of artificial intelligence (AI) to pediatric ultrasound (created with biorender.com). AI can be applied to pediatric ultrasound for preliminary diagnosis and screening, detailed analysis, and decision support for different systemic diseases in children.

Applications of US in pediatrics

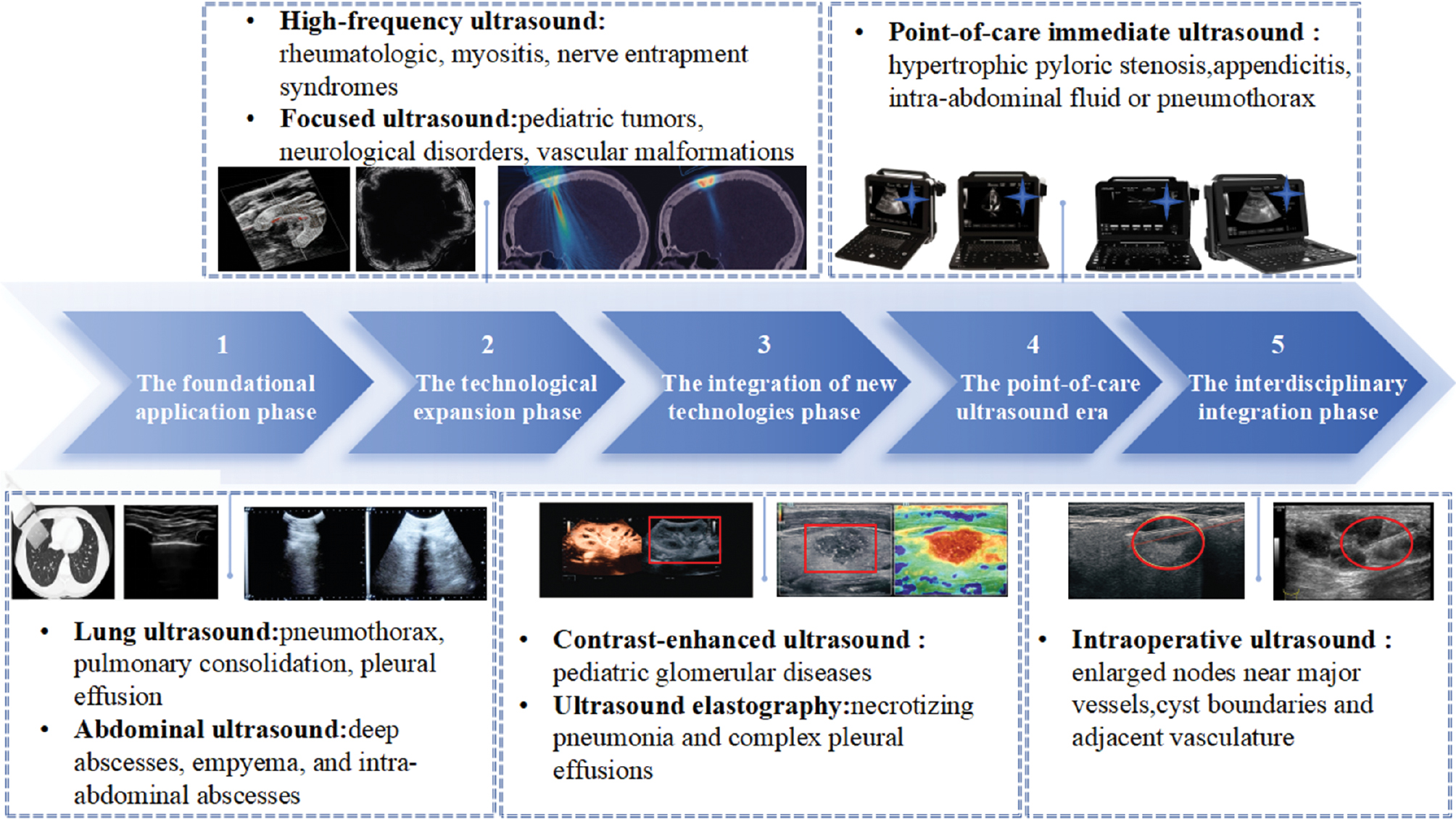

The development of US in pediatric diagnosis and treatment has undergone five distinct phases: foundational application; technological expansion; integration of new technologies; point-of-care ultrasound (POCUS); and interdisciplinary integration (Figure 2). During the foundational application phase, US gained prominence as a key diagnostic tool for respiratory, infectious, and acute abdominal conditions, owing to advantages, such as being radiation-free and providing real-time dynamic imaging. For example, lung ultrasound (LUS) enables the rapid diagnosis of pneumothoraces, pulmonary consolidation, and pleural effusions, while the LUS scoring system is used for dynamic monitoring of lung resuscitation efficacy in neonatal respiratory distress syndrome [9, 10]. US accurately assesses the extent of deep abscesses, empyema, and intra-abdominal abscesses in infectious disease diagnostics, guiding treatment decisions and optimizing therapeutic durations [10]. The application of US improves diagnostic efficiency and treatment while helping to protect the safety of children.

Figure 2 The application of ultrasound in pediatrics.

AI has significantly enhanced the diagnostic and therapeutic capabilities of US, with applications expanding into different fields. The introduction of high-frequency US has enabled ultra-high-resolution imaging in musculoskeletal disorders, allowing precise evaluation of superficial structures, such as the skin, nails, muscles, and peripheral nerves. This advance aids the early diagnosis of pediatric conditions, such as rheumatologic diseases, myositis, and nerve entrapment syndromes. Focused ultrasound (FUS) has undergone significant breakthroughs as a non-invasive therapeutic modality. FUS expands treatment options for pediatric tumors, neurologic disorders, and vascular malformations through mechanisms, such as thermal ablation, blood-brain barrier disruption, and neuromodulation. The combination of high-frequency imaging and FUS reduces the trauma and radiation risks associated with traditional surgery and radiotherapy, thereby advancing precision medicine [11, 12].

Technological integration innovations, such as contrast-enhanced ultrasound (CEUS) and elastography, have demonstrated significant clinical value. Supersonic shear wave imaging (SSI) enables quantification of renal cortical Young’s modulus (YM) in elastography, achieving a sensitivity of 87.3% and specificity of 86.7% in diagnosing pediatric glomerular diseases [13]. In contrast, CEUS is widely used for multi-organ assessment. For example, CEUS accurately diagnoses necrotizing pneumonia and complex pleural effusions by differentiating consolidation from fluid collections via intravenous or intracavitary contrast administration, guiding drainage and fibrinolytic therapy. CEUS enhances visualization of endocardial borders post-congenital heart surgery, improves ventricular function and perfusion assessment, and is now FDA-approved for pediatric cardiac imaging. These radiation-free, non-invasive technologies complement conventional imaging with real-time dynamic capabilities [14, 15].

POCUS enables more comprehensive healthcare monitoring and evaluation in pediatrics. By integrating LUS for pulmonary edema assessment with cardiac US for evaluating ventricular function and inferior vena cava dynamics, POCUS optimizes hemodynamic monitoring and fluid management in critically ill children [16]. Pediatric surgical applications include the rapid diagnosis of hypertrophic pyloric stenosis through muscle thickness and channel length measurement, appendicitis screening to reduce CT radiation exposure, focused assessment with sonography for trauma (FAST) exams for assessing intra-abdominal fluid or pneumothoraces, US-guided central venous catheter placement to improve success rates and reduce complications, and localization of pleural effusions or soft tissue infections [17]. Intraoperative ultrasound (IOUS) enhances the precision of minimally invasive pediatric surgery. FUS-guided inguinal hernia repair in female children during laparoscopic and robotic procedures achieves precise localization of hernia sacs and vascular structures with laparoscopic validation of repair efficacy [18]. IOUS aids in identifying enlarged nodes near major vessels during laparoscopic lymph node dissection and delineates cyst boundaries and adjacent vasculature for safe drainage in robotic splenic cyst fenestration [19].

Despite the expanding applications, the clinical adoption of AI-enhanced US in multidisciplinary integration of pediatrics faces several challenges. First, the quality of US images and diagnostic accuracy are heavily reliant on operator experience, particularly when evaluating complex anatomic structures. Novice clinicians are prone to misinterpretation due to incorrect plane selection [20]. Second, issues related to image noise and motion artifacts are significant, especially in children in whom smaller body size and limited cooperation lead to motion artifacts and interference from bone and gas, thereby compromising image quality and impeding the detection of subtle pathologies [21]. Furthermore, traditional US equipment is often large, costly, and difficult to deploy in primary care or home monitoring settings [22]. Third, a lack of standardization persists with substantial variation in the parameters across different US systems and no unified standards for pediatric image annotation. To overcome these challenges, there is a pressing need to develop objective and accurate standardization methodologies to drive future advances in AI-enhanced US.

Basics and trends of AI in medical image processing

AI is a broad concept encompassing various fields, including machine learning (ML) and deep learning (DL). The fundamental idea underlying AI was first introduced by John McCarthy in 1956, defining AI as “the science and engineering of creating intelligent machines.” ML is an essential subfield of AI, while DL represents a more advanced and efficient technique within ML. ML can typically be divided into three types: supervised learning; unsupervised learning; and reinforcement learning. Supervised learning involves training on labeled data and is widely used in tasks, such as image interpretation. In contrast, unsupervised learning does not rely on labeled data and is commonly applied in data clustering and trend analysis. Reinforcement learning uses rewards or penalties to guide the AI system to maximize decision-making performance within a specific environment [23].

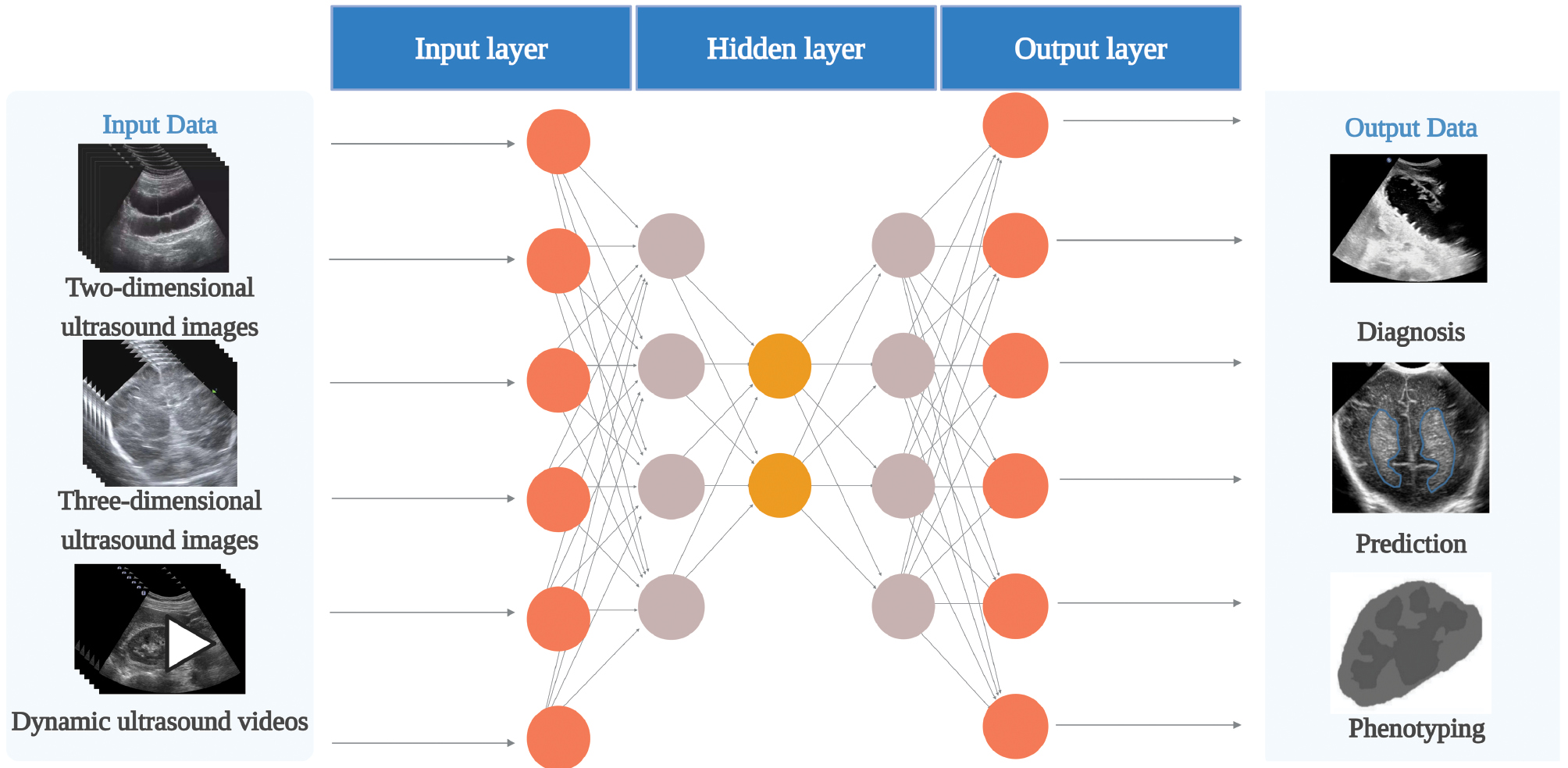

DL has become an emerging trend in medical image processing and multimodal data analysis. Neural networks, which serve as the foundation of DL, are computational models that mimic the neural circuits of the human brain [24]. The basic structure of a neural network typically consists of three layers: an input layer for data input; a hidden or intermediate layer for processing the weighted signals passed on from the input layer; and an output layer for outputting the result (Figure 3). DL can handle more complex data by increasing the number of hidden layers in a neural network, thereby improving the predictive accuracy of the model [25]. This approach not only overcomes the reliance on pre-defined features in traditional ML but also enables machines to learn and autonomously extract features from the data. This advance has shifted US image analysis from qualitative, experience-based evaluations to precise, data-driven analyses.

Figure 3 Neural network architecture (created with biorender.com). Each neuron is a computational unit. A neural network comprises one input layer, multiple hidden layers (one hidden layer in the figure), and one output layer. The input data in pediatric ultrasound artificial intelligence includes two-dimensional ultrasound images, three-dimensional ultrasound images, and dynamic ultrasound videos. The output data includes diagnosis, prediction, and phenotype.
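The layered structure described above can be illustrated with a minimal sketch of a single forward pass. This is not taken from any of the reviewed studies: the layer sizes, the random weights, the 16-value "image", and the three output classes are all hypothetical, and a real model would learn its weights from labeled US data.

```python
import math
import random

def dense(inputs, weights, biases):
    """One fully connected layer: weighted sum of the inputs plus a bias per neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Rectified linear unit, applied element-wise."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw output scores into probabilities that sum to 1."""
    peak = max(values)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def forward(pixels, params):
    """Input layer -> one hidden layer -> output layer."""
    hidden = relu(dense(pixels, params["hidden_w"], params["hidden_b"]))
    return softmax(dense(hidden, params["out_w"], params["out_b"]))

def random_layer(rng, n_out, n_in):
    """Hypothetical untrained weights, used only to make the sketch runnable."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]

rng = random.Random(0)
N_PIXELS, N_HIDDEN, N_CLASSES = 16, 8, 3  # illustrative sizes only
params = {
    "hidden_w": random_layer(rng, N_HIDDEN, N_PIXELS),
    "hidden_b": [0.0] * N_HIDDEN,
    "out_w": random_layer(rng, N_CLASSES, N_HIDDEN),
    "out_b": [0.0] * N_CLASSES,
}
pixels = [rng.random() for _ in range(N_PIXELS)]  # stand-in for a flattened image patch
probabilities = forward(pixels, params)
print(probabilities)
```

Adding further hidden layers would mean repeating the `dense` and `relu` steps before the output layer, which is the sense in which deeper networks can represent more complex mappings.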

Convolutional neural networks (CNNs) are widely used in medical image analysis as a type of deep neural network. CNNs process pixel values and transform complex visual patterns into simpler, smaller patterns, which is the standard method for image recognition [26]. CNNs are particularly effective in regional feature extraction with a mechanism that corresponds to how the animal visual cortex responds to important visual stimuli. In addition to static image analysis, DL technologies have increasingly been applied to dynamic image analysis, offering greater potential for real-time US image processing and diagnosis. In recent years, novel DL methods, such as generative adversarial networks (GANs) and transfer learning have shown high effectiveness in medical image processing [27, 28].
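As a rough illustration of how a convolutional layer reduces an image to smaller regional feature maps, the sketch below applies one hand-written vertical-edge kernel followed by max pooling to a toy 6 x 6 "image". The image, the kernel, and the helper names `conv2d` and `max_pool` are assumptions made for illustration; in a trained CNN the kernels are learned rather than hand-written.

```python
def conv2d(image, kernel):
    """Valid (unpadded) 2D cross-correlation, the core operation of a CNN layer."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(k_rows) for j in range(k_cols))
             for c in range(out_cols)]
            for r in range(out_rows)]

def max_pool(feature_map, size=2):
    """Non-overlapping max pooling: keep the strongest response in each block."""
    return [[max(feature_map[r + i][c + j]
                 for i in range(size) for j in range(size))
             for c in range(0, len(feature_map[0]) - size + 1, size)]
            for r in range(0, len(feature_map) - size + 1, size)]

# Toy image: dark left half (0) and bright right half (1), i.e. one vertical edge.
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]

# Hand-written vertical-edge kernel (hypothetical; real CNN kernels are learned).
edge_kernel = [[-1, 0, 1],
               [-1, 0, 1],
               [-1, 0, 1]]

feature_map = conv2d(image, edge_kernel)  # 4 x 4 map, responds only near the edge
pooled = max_pool(feature_map)            # 2 x 2 summary of the strongest responses
print(feature_map[0], pooled)
```

The feature map is non-zero only where the window straddles the brightness change, which is the kind of regional feature extraction the text attributes to CNNs.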

It is worth noting that in recent years, large language models (LLMs) have demonstrated unprecedented capabilities in the field of natural language processing. Indeed, the application of LLMs in medicine has garnered increasing attention. In conjunction with image analysis methods, LLMs can efficiently parse clinical US reports, automatically generate reports, extract key information, and correct potential errors [29]. This capability not only improves text structuring and information standardization but also facilitates seamless integration between US imaging results and clinical documentation, thereby optimizing clinical workflows. LLM-based automated text processing can reduce human errors in report writing, shorten the time needed to generate diagnostic reports, and enhance the integration and analysis of multimodal data, especially in pediatric settings [30].

AI has developed rapidly in pediatric ultrasound, driven by the growth in image data and advances in computational power. AI-assisted ultrasound diagnosis allows for more precise evaluation of disease types, such as distinguishing between benign and malignant conditions. The discriminative ability of an AI model can be assessed in a threshold-independent manner using the area under the receiver operating characteristic (ROC) curve (AUC). In contrast, performance metrics, such as sensitivity, specificity, and accuracy, are also widely used to evaluate AI models but depend on specific threshold settings [31]. Looking ahead, the development of AI in medical image processing will not only focus on image feature extraction through DL but will also expand to cross-modal integration with LLMs, achieving end-to-end intelligent support from images to text. The implementation of AI has unlocked unprecedented potential in US image analysis for the diagnosis, screening, detailed analysis, and treatment decisions of various pediatric diseases.
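The difference between a threshold-independent metric (AUC) and threshold-dependent metrics (sensitivity, specificity, and accuracy) can be made concrete with a small sketch. The labels and scores below are invented for illustration and do not come from any study in this review; the AUC is computed with the standard pairwise-ranking definition.

```python
def auc(labels, scores):
    """AUC as the probability that a random positive case outscores a random
    negative case (ties count as 0.5). No decision threshold is involved."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

def threshold_metrics(labels, scores, threshold):
    """Sensitivity, specificity, and accuracy at one decision threshold."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(labels, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / len(labels),
    }

# Hypothetical model outputs: 1 = disease present, 0 = disease absent.
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.5, 0.3, 0.2, 0.2, 0.1]

model_auc = auc(labels, scores)                       # one value per model
lenient = threshold_metrics(labels, scores, 0.50)     # more cases called positive
strict = threshold_metrics(labels, scores, 0.75)      # fewer cases called positive
print(model_auc, lenient, strict)
```

With the same scores, raising the threshold lowers sensitivity and raises specificity, while the AUC stays fixed, which is why reported sensitivity and specificity should be read together with the threshold used.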

Application of AI in pediatric US

Preliminary diagnosis and disease screening

Integrating AI in pediatric US can improve the accuracy of initial diagnosis and screening and streamline the clinical process. Using DL and image processing technologies, AI can mitigate the limitations of traditional ultrasound, such as high operator dependence, unstable image quality, and insufficient quantitative analysis, while enhancing the early identification of diseases in different systems and providing the basis for comprehensive cross-system diagnosis and screening. In addition, some studies have explored the application of AI in educational training and report generation, such as using automated tools to improve the consistency of clinical reports. These efforts offer a valuable supplement to the multidimensional integration of artificial intelligence in pediatric US, albeit still in the early stages of development.

Digestive system

Intussusception is a common cause of acute abdomen in the pediatric population, primarily affecting children < 2 years of age [32]. Among the high volume of pediatric abdominal cases requiring emergency surgery, the average annual incidence of intussusception in China is 418.1/100,000 [33], which is significantly higher than the global average of 74/100,000 [34]. Intussusception arises when a segment of intestine telescopes into the adjacent segment. Early diagnosis and intervention are critical to prevent intestinal necrosis and alleviate symptoms in children with intussusception [35]. As a non-invasive and painless diagnostic method, US is readily accepted by pediatric patients and their families [36]. The typical US imaging features of intussusception include the “concentric circle” and the “sleeve” signs [37].

Li et al. proposed an improved Faster R-CNN-based DL model for detecting the “concentric circle” sign in pediatric intussusception US images. The study included 440 pediatric abdominal US images, divided into training and validation sets at an 8:2 ratio, and the model achieved an AUC of 0.986, F1 score of 0.932, specificity of 0.941, and recall of 0.920 [32]. The model not only locates and detects concentric circles, but more importantly the core CNN can automatically extract and quantify key features from US images. For example, by analyzing the multi-layer convolutional feature maps, the model can objectively evaluate the clarity of the concentric circle edges, the contrast in echo intensity between the internal layers, and the relative thickness ratio of these layers. This quantitative analysis of diagnostic features can enhance the objectivity and reproducibility of US image interpretation, thereby reducing discrepancies in subjective judgment. The results demonstrate that the DL algorithm can effectively identify and quantify key US imaging features, providing reliable technical support for the rapid and objective diagnosis of this condition.

Kim et al. developed a DL model based on the YOLOv5 architecture for diagnosing pediatric ileocolic intussusception and collected 40,765 gray scale US images from two hospitals. The model generates bounding boxes through target detection, identifies typical US features of intussusception, such as the concentric circle sign in axial scanning and the cuff sign in longitudinal or oblique scanning, and quantifies the echo-intensity differences in key regions to assist with establishing the diagnosis. Following testing, two confidence thresholds (CTopt and CTprecision) were set at 0.557 and 0.790, respectively. The accuracy and recall for each image were 0.957 and 0.800 for CTopt, respectively, and 0.984 and 0.443 for CTprecision, respectively, showing the trade-off between performance metrics [2]. Chen et al. constructed an intelligent diagnostic model for pediatric intussusception based on several DL algorithms (ResNet-152, DenseNet-161, VGG-16, EfficientNet-B7, CIDNet-ResNet-152, CIDNet-DenseNet-161, CIDNet-VGG-16, and CIDNet-EfficientNet-B7). Chen et al. collected 9999 US images to train, modify, and validate the diagnostic models. The model extracted features using the multi-instance deformable Transformer Classification Module (MI-DTC) and gradient-weighted class-activation mapping (Grad-CAM++) techniques. These techniques focused on the hypoechoic region around the bowel in the concentric circle sign and quantified the intensity difference in the hyperechoic rims of the cuff sign, providing objective, quantitative analysis to enhance diagnostic reproducibility. Following verification, the CIDNet-EfficientNet-B7 model showed the best performance with an AUC of 0.9716, specificity of 0.9621, accuracy of 0.8726, and F-Score of 0.8593 [38]. According to these studies, intelligent diagnosis models based on DL perform well in diagnosing pediatric intussusception and have significant potential for application.
AI-driven feature recognition not only aids manual diagnosis but also improves objectivity by quantifying echo intensity and edge features, thereby reducing discrepancies in subjective interpretation. Intussusception can be diagnosed as early as possible, even in the case of limited medical resources, thereby reducing the incidence of intestinal perforation.
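The effect of choosing different confidence thresholds for a detector, as in the two operating points reported by Kim et al., can be sketched as follows. The detections below are invented, and the two selection rules (maximize F1, or take the lowest threshold that reaches a target precision) are assumptions made for illustration; the source does not state how CTopt and CTprecision were derived.

```python
def precision_recall(detections, n_ground_truth, threshold):
    """Precision and recall when only detections at or above the threshold are kept.
    Each detection is (confidence, is_correct)."""
    kept = [correct for confidence, correct in detections if confidence >= threshold]
    true_positives = sum(kept)
    precision = true_positives / len(kept) if kept else 1.0
    recall = true_positives / n_ground_truth
    return precision, recall

def f1_score(precision, recall):
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0

# Hypothetical detector output on a small test set with 8 true lesions.
detections = [(0.95, True), (0.90, True), (0.85, True), (0.80, False),
              (0.70, True), (0.60, True), (0.55, False), (0.40, True),
              (0.30, False), (0.20, False)]
N_GROUND_TRUTH = 8
candidates = sorted(confidence for confidence, _ in detections)

# Assumed rule 1: the threshold that maximizes F1 (a balanced operating point).
balanced_threshold = max(
    candidates,
    key=lambda t: f1_score(*precision_recall(detections, N_GROUND_TRUTH, t)))

# Assumed rule 2: the lowest threshold whose precision reaches a target value.
TARGET_PRECISION = 0.95
strict_threshold = min(
    t for t in candidates
    if precision_recall(detections, N_GROUND_TRUTH, t)[0] >= TARGET_PRECISION)

balanced = precision_recall(detections, N_GROUND_TRUTH, balanced_threshold)
strict = precision_recall(detections, N_GROUND_TRUTH, strict_threshold)
print(balanced_threshold, balanced, strict_threshold, strict)
```

In this toy example the stricter threshold removes every false detection but also discards half of the correct ones, mirroring the direction of the trade-off reported in the study.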

In addition to intussusception, appendicitis is one of the common causes of acute abdominal pain in the pediatric population [39]. Appendicitis is frequently diagnosed using US in children [40]. The US examination, when performed by experts, demonstrated high visualization rates, accuracy, sensitivity, and specificity [41]. However, not all pediatricians or emergency department physicians perform US exams proficiently. Using US to diagnose appendicitis requires extensive training and skill development [42]. To address the shortage of experienced US experts and facilitate early diagnosis, applying AI technology to assist in US diagnostics can help manage appendicitis and reduce the perforation rate. Hayashi et al. developed an AI model based on the U-Net algorithm for automated US-assisted diagnosis of appendicitis, collecting 6914 US images of appendicitis to train the model. The results showed that AI had excellent performance in detecting superficial appendicitis (depth ≤ 5 cm) and helped increase the diagnostic accuracy of pediatricians from a baseline of 50% to 60% [43]. Based on these research findings, the AI model can enhance the diagnostic efficiency of US screening for superficial appendicitis in pediatric patients and reduce the risk of missed diagnoses.

Prolonged jaundice in neonates and infants is a common clinical issue faced by pediatricians and pediatric surgeons. The primary causes of neonatal jaundice include infectious, hemolytic, breast milk, and obstructive jaundice, as well as rare autoimmune disorders [44]. Obstructive jaundice often necessitates early surgical intervention. Biliary atresia (BA) is the leading cause of biliary obstruction in this age group. Therefore, early suspicion, reliable screening tools, and precise diagnostic methods are crucial for timely diagnosis and treatment. While percutaneous liver biopsy and intraoperative cholangiography (IOC) remain the gold standards for diagnosing BA, these invasive techniques carry risks of sampling errors and histopathologic misinterpretation and require specialized medical facilities [45]. In contrast, US has emerged as a valuable, non-invasive diagnostic tool, offering high accuracy, especially in identifying the triangular cord sign and gallbladder anomalies [46]. However, the high diagnostic performance of US can only be achieved by well-trained pediatric surgeons, pediatricians, and pediatric radiologists. Image quality is highly user-dependent and inter-observer differences are expected, particularly among primary physicians.

To overcome these challenges, Hsu et al. developed an AI model based on multiple CNNs, including ResNet-101, ResNet-50, ResNet-18, VGG-16, VGG-19, ShuffleNet, GoogleNet, MobileNetV2, and DenseNet-201, which allows for BA screening without the need for human interpretation. The study utilized 1976 original US images from 180 patients with data augmentation increasing the dataset to 21,010 images for model training. Among the various models tested, ShuffleNet exhibited the best performance with an accuracy of 0.905, sensitivity of 0.678, specificity of 0.967, AUC of 0.926, and an F1 score of 0.754 [47]. Therefore, DL model-based pediatric US demonstrates high specificity and diagnostic potential in the non-invasive early screening of BA and is expected to become a powerful auxiliary tool in clinical work.
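The ShuffleNet figures above (high specificity, moderate sensitivity) can be cross-checked against each other with a short calculation. The sketch below assumes that accuracy, sensitivity, specificity, and F1 were all computed on the same test set, which the text does not state explicitly; under that assumption it derives the implied share of BA-positive images and the implied precision, and recomputes F1. The function names are hypothetical helpers, not part of the original study.

```python
def implied_prevalence(accuracy, sensitivity, specificity):
    """Solve accuracy = sens * p + spec * (1 - p) for the positive fraction p."""
    return (specificity - accuracy) / (specificity - sensitivity)

def implied_precision(sensitivity, specificity, prevalence):
    """Precision from the expected true-positive and false-positive fractions."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)

def f1_score(precision, recall):
    return 2.0 * precision * recall / (precision + recall)

# Values as reported in the text for ShuffleNet [47].
ACCURACY, SENSITIVITY, SPECIFICITY = 0.905, 0.678, 0.967

prevalence = implied_prevalence(ACCURACY, SENSITIVITY, SPECIFICITY)
precision = implied_precision(SENSITIVITY, SPECIFICITY, prevalence)
f1 = f1_score(precision, SENSITIVITY)
print(round(prevalence, 3), round(precision, 3), round(f1, 3))
```

Under the stated assumption, the recomputed F1 is close to the reported 0.754, and the calculation suggests that positive images were a minority of the test set, which is why accuracy remains high even though about one third of positive cases are missed.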

In addition to primary gastrointestinal diseases, complications arising from pediatric enteral nutrition support techniques require careful clinical attention. Percutaneous endoscopic gastrostomy (PEG) is a well-established and safe method for long-term enteral feeding in pediatric patients with malnutrition or dysphagia [48, 49]. A significant long-term complication of PEG is buried bumper syndrome (BBS), characterized by gastric mucosal overgrowth over the internal fixation plate. This condition can lead to severe complications, including peritonitis and gastric perforation [50, 51]. Timely diagnosis and prompt endoscopic or surgical PEG replacement are essential to prevent these complications [52]. The current recommendations for diagnosing BBS include contrast studies via the PEG or upper endoscopy [53]. Computed tomography (CT) may provide additional detailed imaging information [54] but the risk of ionizing radiation needs to be considered for pediatric patients. US has emerged as a valuable, radiation-free alternative. Conventional B-mode US, a standard tool for evaluating gastrointestinal diseases, allows visualization of the gastric wall layers and can help localize the internal fixation plate in BBS, although its dependence on trained personnel limits widespread application [55]. Aguilar et al. assessed a logistic regression-based ML algorithm for BBS diagnosis using US data from 82 pediatric patients with different PEG configurations. The model achieved high diagnostic performance, with precision, recall, and F1 scores of 0.88, supporting a role in early BBS detection and minimizing unnecessary invasive procedures [56].

In summary, AI-based pediatric US demonstrates good performance in diagnosing digestive system diseases, aligns closely with clinical practice, and shows promise as a powerful auxiliary tool.

Respiratory system

The integration of AI with LUS is gradually breaking through the traditional diagnostic model of pediatric respiratory diseases. Pneumonia is a leading cause of morbidity and mortality, accounting for 14% of all deaths in children < 5 years of age in 2019 [57]. A chest radiograph (CXR) is currently the gold standard for evaluating suspected pneumonia or complications of pneumonia [58]. However, there are limitations to using CXR in children, including the radiation exposure and high cost [22]. In contrast, US is a non-ionizing, cost-effective imaging modality that can provide diagnostic accuracy comparable to or surpassing CXR for pediatric pneumonia [59, 60]. However, the main limitation of US is a dependence on operator experience in acquiring and interpreting images, which may affect the diagnostic accuracy.

The use of standardized imaging protocols to regulate data acquisition, combined with AI for image interpretation, is expected to reduce the dependence of conventional US on operator experience, thus improving diagnostic accuracy and operability in different environments [61]. Correa et al. constructed an automatic classification algorithm for pneumonia in children based on pattern recognition of luminance distribution in LUS images and a standard neural network, which was used to automatically differentiate between pneumonia infiltration and normal lung tissue. Correa et al. collected 60 US images from 21 pediatric patients for training and testing. The algorithm achieved a sensitivity of 0.909 and a specificity of 1.000 with performance comparable to that of traditional US physicians (sensitivity, 0.90; and specificity, 0.985). Furthermore, automatic pleural line identification significantly reduced dependence on the experience of the operator [62]. Nti et al. developed an AI-augmented pleural scanning system for POCUS, which helped assess the diagnostic competence of novice learners (NLs) in identifying pneumonia. The study involved 32 pediatric emergency patients and 7 NLs using AI-assisted equipment. The results showed a sensitivity of 0.667, specificity of 0.965, and accuracy of 0.937 for AI-assisted diagnosis by NLs [63]. These studies have demonstrated the significant potential of AI to improve the sensitivity, specificity, and accuracy of diagnostic LUS, especially in supporting NLs and standardizing practices.

Recent technological breakthroughs of AI-enabled LUS have focused on solid lung lesions, a core sign characterizing the severity of pneumonia. Kessler et al. developed a DL algorithm for detecting lung consolidation in pediatric pneumonia using a hand-held US system. By incorporating data from 107 pediatric patients and augmenting training with 44 adult pneumonia cases, Kessler et al. achieved an accuracy of 0.885, sensitivity of 0.880, specificity of 0.890, and an intersection over union (IoU) of 0.6 [57]. This approach addressed the challenge of limited pediatric data.
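Intersection over union (IoU) measures how well a predicted region overlaps a reference annotation. A minimal sketch for axis-aligned bounding boxes follows; whether the cited study computed IoU on boxes or on segmentation masks is not stated here, so the box format `(x_min, y_min, x_max, y_max)` and the example coordinates are assumptions chosen for illustration.

```python
def box_area(box):
    """Area of an axis-aligned box given as (x_min, y_min, x_max, y_max)."""
    return max(0, box[2] - box[0]) * max(0, box[3] - box[1])

def box_iou(predicted, reference):
    """Intersection over union: overlap area divided by combined area."""
    overlap_width = max(0, min(predicted[2], reference[2]) - max(predicted[0], reference[0]))
    overlap_height = max(0, min(predicted[3], reference[3]) - max(predicted[1], reference[1]))
    intersection = overlap_width * overlap_height
    union = box_area(predicted) + box_area(reference) - intersection
    return intersection / union if union else 0.0

# Hypothetical consolidation boxes in pixel coordinates.
predicted_box = (0, 0, 8, 10)   # model output
reference_box = (2, 0, 10, 10)  # expert annotation
iou = box_iou(predicted_box, reference_box)
print(iou)
```

An IoU of 1.0 means the two regions coincide exactly and 0.0 means they do not overlap; in the toy example the boxes share 60 of the 100 pixels they cover together.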

Beyond diagnostic performance, another promising dimension of AI lies in the potential role in medical education and training. Studies have shown that AI-based virtual simulation systems and simulators can offer young physicians real-time guidance during procedures, automated skill assessments, and personalized feedback, all of which contribute to improved learning efficiency and operational standardization. By dynamically annotating anatomic structures, optimizing probe paths, and comparing to expert standards, AI training platforms can accelerate skill development in trainees while reducing the burden on instructors [64, 65]. These efforts present promising ideas for the future of talent development and technological advancement in pediatric US, albeit in the early stages.

In parallel, automated support for pneumonia diagnosis is particularly valuable in resource-constrained areas. However, single-frame image analysis cannot readily capture dynamic features, such as B-line distribution and lung sliding, for which traditional CNNs may be less effective. To overcome this, Khan et al. introduced the Fused LUS-Encoded Transformer (FLUEnT) framework, which was trained on 2400 video clips from 200 pediatric patients. FLUEnT achieved a sensitivity of 0.838 and a specificity of 0.942 in video classification and a sensitivity of 0.973 and specificity of 0.833 in lung consolidation screening. The results suggest that FLUEnT may serve as a pneumonia screening tool for children in resource-limited areas [66].

Aujla et al. proposed an ML-based model incorporating clinical features for automated diagnosis of six lung diseases, including pneumonia-associated pulmonary solid lesions. The study used 24,231 LUS images and 288 videos, which were classified by extracting recurrence quantification analysis (RQA) features from vertical and horizontal scan lines. The clinical features included gestational age, age at scanning, and number of days of life. Gestational age is a key variable that is particularly useful in distinguishing the unique lung patterns of preterm infants (e.g., the white lung feature in respiratory distress syndrome [RDS] or the abnormal echoes that occur in chronic lung disease [CLD]). Gestational age is useful because the structural immaturity of the lungs in preterm infants leads to significant variability in recurring features (e.g., B-line fusion or pleural line thickening) on LUS images. Integrating gestational age into diagnostic models improves performance by quantifying the correlation between lung developmental status and US morphology, thereby enhancing classification accuracy for disease groups, such as RDS, consolidated lung changes (CON), and CLD. The linear discriminant analysis model combined with clinical features, such as gestational age, had a classification accuracy of 0.776 [67].

In summary, AI-assisted pediatric US performs well in diagnosing and classifying respiratory diseases in children and can provide an early screening tool in primary care scenarios where there is a lack of experienced radiologists.

Skeletal system

Distal radius fractures account for approximately 25% of all pediatric fractures [68] and are usually caused by falls onto an outstretched hand, often involving the metaphysis or growth plate. Fracture patterns vary by injury location, directly affecting treatment strategies [69]. Radiography remains the standard diagnostic tool. However, each wrist X-ray exposes children to approximately 1 µSv of radiation [70]. Although 1 µSv is significantly lower than the average annual environmental radiation exposure (443 µSv in Canada), even minimal radiation poses an increased risk to children, whose rapidly growing cells heighten susceptibility to radiation-induced malignancies by 10%–15% compared to adults [71]. Despite being widely available and cost-effective, US remains underutilized for fracture detection. US has proven to be effective in identifying cortical disruptions and joint effusions with diagnostic accuracy comparable to radiographs for distal radius fractures [72, 73]. 3DUS further enhances anatomic visualization by capturing volumetric data through high-quality video sweeps, offering a more comprehensive depiction of fracture anatomy than conventional 2DUS images. However, interpreting 3DUS requires considerable expertise due to the complexity and volume of data.

Zhang et al. developed an AI system combining 3DUS and CNN to detect pediatric wrist fractures and overcome the limitations of manual interpretation. Zhang et al. collected 3DUS images from 30 children to test and validate the diagnostic system. The experimental results showed that the system had a specificity of 0.89 and the kappa consistency coefficient between the diagnosis results of the system and physicians was 0.48–0.74, which indicates that the system offers practical application significance [72]. Building upon this success, Knight et al. expanded the sample size and incorporated portable US, developing an AI model based on ResNet34 and DenseNet121 architectures for diagnosing pediatric distal radius fractures in 2DUS and 3DUS images. The DenseNet121 model had the highest sensitivity in 2DUS and 3DUS relative to ResNet34 and the expert ultrasonographer (0.91 and 1.00, respectively). These studies showed that AI diagnostic performance is in high agreement with experts and can be used as an adjunctive diagnostic tool for distal radius fractures, significantly reducing specialist workload and improving efficiency. AI also provides a more cost-effective solution for clinical rollout [8].
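
The kappa coefficient quoted above corrects raw agreement between the AI system and physicians for the agreement expected by chance. The sketch below implements Cohen's kappa for two raters; the label lists are hypothetical and are not data from Zhang et al.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two equally long lists of categorical labels."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must label the same non-empty set of cases")
    # Observed agreement: share of cases on which both raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    labels = set(freq_a) | set(freq_b)
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical fracture calls (1 = fracture, 0 = no fracture) for ten scans.
ai_calls = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
reader_calls = [1, 1, 0, 0, 0, 0, 1, 1, 1, 0]
print(cohen_kappa(ai_calls, reader_calls))  # observed 0.8, chance 0.5, kappa about 0.6
```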

With the successful use of AI in the immediate diagnosis of acute trauma, researchers are further exploring the potential in precise monitoring of chronic developmental abnormalities. Developmental dysplasia of the hip (DDH) is a common bone and joint disorder in infants that encompasses a spectrum of abnormalities, including hip dislocation, subluxation, and dysplasia with or without instability [74]. Early diagnosis of DDH has been shown to significantly reduce the need for surgery, prevent complications, and improve patient outcomes. While diagnostic modalities, like X-rays, CT scans, and MRI, are available, US remains the preferred method due to its safety profile [75]. However, the accuracy of hip US can vary based on image quality and patient factors, such as age, height, and weight. To address this challenge, Chen et al. constructed a DL model based on a long short-term memory (LSTM) network that evaluates the applicability of DDH ultrasonography by integrating personal information, such as gender, age, height, and weight of infants. Chen et al. collected 290 infant left hip US images for training, optimizing, and validating the model. The intraclass correlation coefficients (ICCs) for the α- and β-angles between AI and manual measurements were 0.98 and 0.93, respectively. This model provides a quantitative basis for clinical selection of the timing of US examinations for DDH, which in turn helps to improve the diagnostic accuracy of DDH [76]. Building on the versatility of 3DUS in musculoskeletal diagnosis, which includes hip and hand bone analysis through automated volumetric scanning with AI segmentation, Chen et al. developed a radiation-free bone age assessment model. This model utilizes convolutional neural networks to identify and segment bone structures in 3DUS images of the hand. 
The study introduced a novel automated scanning device with a multi-stage AI processing workflow, marking the first application of AI-driven 3DUS technology in bone age assessment. This innovation provides an effective solution to mitigate the risks of X-ray radiation exposure in children [77].

The introduction of AI in scoliosis monitoring also provides innovative solutions to improve diagnostic accuracy and reduce radiation exposure in complex deformities. Scoliosis is a common spinal deformity that affects 2%–3% of adolescents, of whom approximately 10% worsen and require treatment [78]. Early detection is critical in managing curve progression with orthotic treatment and minimizing the need for surgical intervention [79]. Traditional scoliosis monitoring relies heavily on X-rays, raising concerns about cumulative radiation exposure [80]. While US offers a safer alternative, its utility has been limited by image quality and acoustic artifacts [5].

Advances in AI-based segmentation have renewed interest in US for scoliosis detection. Foroughi et al. initially proposed dynamic programming-based segmentation methods [81], and their work was followed by studies from Berton et al., who explored image processing techniques [82]. In recent years CNNs have made significant progress in image processing and can effectively capture complex image patterns [83]. The application of CNNs for real-time bone segmentation and volume reconstruction in spine scanning shows excellent promise. Ungi et al. constructed a U-Net-based automatic spinal US segmentation model for 3D visualization and measurement of scoliosis. Ungi et al. collected 5182 ultrasound images for training and testing. The results showed that the recall, precision, and AUROC values of the model were 0.966, 0.059, and 0.970, respectively. The model enabled 3D reconstruction of the spine with clinically acceptable angular accuracy (average deviation, 2.0°; and maximum deviation, 2.2°) within 1 min, providing a rapid and radiation-free alternative for scoliosis screening [84]. The CNN-based pediatric US diagnostic model performs well in screening scoliosis and has significant potential for application. In conclusion, combining AI and 3DUS offers innovative solutions for diagnosing and monitoring pediatric skeletal diseases.

Urinary system

Renal abnormalities are a significant diagnostic focus in pediatric medicine with US screening being an effective tool for detecting “silent” renal anomalies, thus enabling early diagnosis and intervention [85]. A study involving > 1 million school-aged children identified several common renal abnormalities via US, including hydronephrosis (39.6%), unilateral small kidney (19.8%), unilateral renal agenesis (15.9%), and cystic diseases (13.9%) [86]. Among these abnormalities, hydronephrosis, which is characterized by dilation of the renal collecting system, is one of the most prevalent pediatric urologic disorders. Accurate identification of hydronephrosis is crucial for clinical management because failure to diagnose hydronephrosis early can lead to complications, such as urinary tract infections, sepsis, and progressive renal impairment [87]. Despite the utility of US, current diagnostic methods still face limitations in quantitative analysis due to inherent image noise, low contrast, and the subjective nature of image interpretation [88, 89].

To address these challenges, recent advances in AI have driven the transition from semi-automated to fully automated image segmentation and quantitative measurement of lesion areas. Mendoza et al. developed a novel automated segmentation approach for pediatric renal US, combining an improved active shape model (ASM) framework with graph-cuts (GCs) for calculating the hydronephrosis index (HI). Mendoza et al. collected 34 pediatric renal US images for model training, refinement, and validation. The refined ASM and GC methods achieved optimal segmentation performance with dice coefficients (DCs) of 0.87 ± 0.05 for kidney segmentation and 0.62 ± 0.32 for hydronephrosis detection. In addition, the automatically calculated HI exhibited a strong correlation with manual measurements with an inter-operator correlation coefficient of 0.99, demonstrating comparable reliability to manual segmentation in low signal-to-noise US images. This approach provides a reliable and efficient tool for quantitatively assessing pediatric hydronephrosis [90].
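
The two quantities in this paragraph can be sketched in a few lines of Python. The Dice function follows the standard definition. The HI function assumes the commonly used definition, HI = 100 × (kidney area − dilated collecting system area) / kidney area, which is not spelled out in the text above. The masks are hypothetical.

```python
def dice(mask_a, mask_b):
    """Dice coefficient of two same-sized binary masks (nested lists of 0/1)."""
    intersection = size_a = size_b = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            if a and b:
                intersection += 1
            size_a += 1 if a else 0
            size_b += 1 if b else 0
    total = size_a + size_b
    return 2 * intersection / total if total else 1.0


def hydronephrosis_index(kidney_mask, collecting_system_mask):
    """HI in percent, under the assumed area-based definition stated above."""
    kidney_area = sum(1 for row in kidney_mask for value in row if value)
    if kidney_area == 0:
        raise ValueError("kidney mask is empty")
    # Only count dilated pixels that lie inside the kidney outline.
    dilated_area = sum(
        1
        for row_k, row_c in zip(kidney_mask, collecting_system_mask)
        for k, c in zip(row_k, row_c)
        if k and c
    )
    return 100.0 * (kidney_area - dilated_area) / kidney_area


# Hypothetical 4 x 4 masks.
kidney = [[0, 1, 1, 0],
          [1, 1, 1, 1],
          [1, 1, 1, 1],
          [0, 1, 1, 0]]
cs_reference = [[0, 0, 0, 0],
                [0, 1, 1, 0],
                [0, 1, 0, 0],
                [0, 0, 0, 0]]
cs_predicted = [[0, 0, 0, 0],
                [0, 1, 1, 0],
                [0, 0, 0, 0],
                [0, 0, 0, 0]]
print(dice(cs_predicted, cs_reference))            # 2 * 2 / (2 + 3) = 0.8
print(hydronephrosis_index(kidney, cs_reference))  # 100 * (12 - 3) / 12 = 75.0
```

Because the collecting system occupies few pixels, a small boundary error changes its Dice coefficient far more than the same error would for the whole kidney, which is consistent with the lower and more variable values reported for hydronephrosis segmentation.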

To overcome the limitations of single 2DUS sagittal slice segmentation for HI computation, Cerrolaza et al. further proposed a framework combining Gabor-based appearance models (GAMs) and optimized GC algorithms to segment the kidney and the renal collecting system (CS) in pediatric 3DUS images. The framework was validated using 3DUS data from 8 pediatric patients. GAM achieved a DC of 0.86 ± 0.04 for kidney segmentation and GC yielded a DC of 0.62 ± 0.19 for CS segmentation, particularly excelling in low-contrast images. The framework also significantly reduced the 3D HI estimation error from 32% to 2.5%, underscoring the importance of 3DUS for diagnosing pediatric hydronephrosis [91]. Cerrolaza et al. further optimized the model by incorporating a Gabor-based fuzzy appearance model (FAM) and patient-specific alpha shapes for 3DUS renal segmentation. Trained and validated on 39 pediatric 3DUS images, the optimized model demonstrated a DC of 0.86 ± 0.05 for kidney segmentation and 0.74 ± 0.10 for CS segmentation. FAM significantly outperformed the classical ASM, achieving a correlation coefficient of 0.92 with expert assessments. This approach allows for fully automated, rapid segmentation of the renal collecting system, significantly improving diagnostic efficiency and making FAM especially suitable for diagnosing and evaluating pediatric hydronephrosis [92].

Moving beyond these traditional model-based approaches, Tsai et al. developed a DL model based on ResNet-50 and transfer learning for automated screening of pediatric renal US abnormalities. After training and testing on 1926 US images, the model achieved an overall accuracy of 0.929 with an AUC value of 0.959. The accuracy for detecting hydronephrosis and kidney stones was 0.941 and 0.932, respectively. These findings demonstrate the high clinical potential of the model, especially in detecting hydronephrosis and nephrolithiasis [93]. In conclusion, the rapid development of AI in US image segmentation and applications has provided a powerful tool for diagnosing pediatric urologic abnormalities, significantly improving the efficiency and reliability of clinical evaluation.
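
The AUC values cited in this review summarize how well a model's scores rank abnormal cases above normal ones. A minimal sketch of the rank-based (Mann-Whitney) formulation is given below; the labels and scores are hypothetical and unrelated to the data of Tsai et al.

```python
def auc(labels, scores):
    """Probability that a random positive case scores higher than a random negative case."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in positives:
        for q in negatives:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Hypothetical model outputs for six images (1 = abnormal, 0 = normal).
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]
print(auc(labels, scores))  # 8 of 9 positive-negative pairs are ranked correctly
```

Unlike accuracy, this quantity does not depend on a decision threshold, which is one reason studies often report both.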

Cardiovascular system

Congenital heart disease (CHD) is a structural malformation caused by abnormal heart development, which results in cardiac dysfunction with clinical manifestations of hemodynamic disturbances and even organ failure. As one of the leading causes of congenital disabilities in neonates, CHD has a global incidence of approximately 1% and contributes to 3% of infant mortality, making CHD a significant risk factor for neonatal death [94]. Clinical screening tools, including medical history review, auscultation, chest radiography, and hyperoxia testing, lack sufficient sensitivity in non-specialized healthcare settings, particularly for subtle defects like coarctation of the aorta (CoA), which accounts for 6%–8% of CHD cases. CoA, resulting in severe circulatory disturbances due to aortic arch narrowing, is one of the most life-threatening conditions in neonates [95]. US has become an essential tool for prenatal screening and postnatal diagnosis of CHD due to its portability, ease of use, and radiation-free properties. However, the traditional manual interpretation of US images heavily relies on clinician experience, leading to subjective bias, especially in mild anatomic variations or complex malformations.

In recent years ML methods have been widely applied in echocardiography, including 2D cardiac view classification, cardiac boundary segmentation, parameter quantification, and 3D reconstruction [96, 97]. Among these methods, DL has shown significant potential in detecting complex malformations. To address challenges in detecting CoA, Pereira et al. proposed a DL framework integrating a stacked denoising autoencoder (SDAE) and a support vector machine (SVM) for automated CoA detection in neonatal echocardiograms. Pereira et al. included 26 patients with CoA and 64 healthy neonates, integrating multi-view US features, such as parasternal long axis (PSLAX) and apical four-chamber (AC4). The results showed a sensitivity of 0.885 and specificity of 0.864, significantly outperforming traditional methods, like pulse oximetry. Model performance was directly validated against the gold standard of echocardiography. Using the PSLAX+AC4 dual-view combination strategy, the overall error rate was reduced to 6.7% (95% CI: 6.1%–7.6%) with a false negative rate of only 3.8% (2.4%–6.7%) and a false positive rate of 7.8% (7.0%–9.0%). This approach significantly outperformed the indirect method of pulse oximetry combined with clinical assessment, which had a sensitivity of 71%. The above DL-based intelligent pediatric US diagnostic model performs well in CHD diagnosis and is expected to be a powerful auxiliary tool in clinical work [98].
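
The relationship between the error rates quoted above can be illustrated with simple arithmetic. In the sketch below, the cohort sizes (26 CoA and 64 healthy neonates) come from the text, whereas the error counts (1 false negative and 5 false positives) are assumed values chosen because they are consistent with the reported percentages; they are not stated in the text.

```python
def error_rates(n_positive, n_negative, false_negatives, false_positives):
    """Return (false negative rate, false positive rate, overall error rate)."""
    fnr = false_negatives / n_positive
    fpr = false_positives / n_negative
    overall = (false_negatives + false_positives) / (n_positive + n_negative)
    return fnr, fpr, overall


# Cohort sizes from the text; error counts are illustrative assumptions.
fnr, fpr, overall = error_rates(
    n_positive=26, n_negative=64, false_negatives=1, false_positives=5
)
print(round(100 * fnr, 1), round(100 * fpr, 1), round(100 * overall, 1))
```

The overall error rate is a case-weighted average of the two class-specific rates, so it sits closer to the false positive rate when healthy neonates outnumber CoA cases.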

Nervous system

Germinal matrix hemorrhage (GMH) and periventricular intraventricular hemorrhage (IVH) are the most common types of intracranial hemorrhage in preterm infants, typically occurring within the first few days after birth [99]. Early detection of GMH is crucial for managing the condition and preventing further complications. Because of its high sensitivity and lack of ionizing radiation, cranial US remains the preferred imaging modality for assessing GMH/IVH [100]. DL has the potential to improve early identification of intracranial abnormalities in preterm infants, a critical period for brain development and neuroprotection [101]. Before the widespread use of DL in medical imaging, studies used clinical data, such as Apgar scores and arterial blood gas analysis, to develop artificial neural networks to predict severe IVH (grades III-IV) [102]. Other research has explored adult head CT or neonatal US for hemorrhage detection [103, 104] but none specifically addressed GMH detection. To bridge this gap, Kim et al. developed a CNN based on transfer learning for the automated detection of GMH in cranial US images. The study included 400 preterm infants with US images used for training, validation, and testing. The model showed strong performance, achieving an AUC of 0.92, sensitivity of 0.82, and specificity of 0.90. Utilizing small-sample data augmentation, this approach provides a reliable method for early diagnosis and risk assessment of GMH, offering significant clinical value for managing preterm infants [105]. The results of these studies are summarized in Table 1.

Table 1 Summary of the Application of AI Models for Preliminary Diagnosis and Disease Screening in Pediatric Ultrasound

| Human Organ Systems | Diseases | Subject | AI Algorithms | Number of Samples | Reported Performance | Ref. |
|---|---|---|---|---|---|---|
| Digestive system | Intussusception | Diagnosis | Faster R-CNN | 440 pediatric patients | AUC = 0.986, F1 score = 0.932, Recall = 0.920, Specificity = 0.941 | [32] |
| | Ileocolonic intussusception | Diagnosis | YOLOv5x | 40,765 US images | CTopt: Precision = 0.957, CTopt: Recall = 0.800, CTprecision: Precision = 0.984, CTprecision: Recall = 0.443 | [2] |
| | Intussusception | Diagnosis | ResNet-152, DenseNet-161, VGG-16, EfficientNet-B7, CIDNet-ResNet-152, CIDNet-DenseNet-161, CIDNet-VGG-16, CIDNet-EfficientNet-B7 | 9999 US images | AUC = 0.9716, Sensitivity = 0.9621, Specificity = 0.9834, Accuracy = 0.8726, F score = 0.8593 | [38] |
| | Appendicitis | Diagnosis | U-Net | 6914 US images | AI-enhanced pediatric diagnosis accuracy from 50%–60% | [43] |
| | Biliary atresia | Screening | ResNet-101, ResNet-50, ResNet-18, VGG-16, VGG-19, ShuffleNet, GoogleNet, MobileNetV2, DenseNet-201 | 1976 US images | AUC of ShuffleNet = 0.926, Accuracy of ShuffleNet = 0.905, Sensitivity of ShuffleNet = 0.967, F1 score of ShuffleNet = 0.754 | [47] |
| | Buried bumper syndrome | Diagnosis | Logistic regression | 82 pediatric patients | Recall = 0.88, F1 score = 0.88 | [56] |
| Respiratory system | Pneumonia | Diagnosis | ANN | 21 pediatric patients | Sensitivity = 0.909, Specificity = 1.000 | [62] |
| | Pneumonia (lung consolidation) | Diagnosis | DL | 107 pediatric patients and 44 adult patients | Accuracy = 0.885, Sensitivity = 0.880, Specificity = 0.890, IoU = 0.62 | [57] |
| | Pneumonia (lung consolidation) | Detection | FLUEnT | 2400 videos | Sensitivity = 0.973, Specificity = 0.833 | [57, 66] |
| | Pneumonia (lung consolidation) | Classification | ML | 24,231 US images and 288 videos | Accuracy = 0.776 | [67] |
| Skeletal system | Distal radius fractures | Diagnosis | CNN | 30 pediatric patients | Specificity = 0.89, Kappa = 0.48–0.74 | [72] |
| | Distal radius fractures | Diagnosis | DenseNet121 | 122 pediatric patients | 2DUS: Sensitivity = 0.91, 3DUS: Sensitivity = 1.00 | [8] |
| | Developmental dysplasia of the hip | Diagnosis | DL | 290 US images | α angles: ICC = 0.98, β angles: ICC = 0.93 | [76] |
| | Scoliosis | Diagnosis | U-Net | 5182 US images | Recall = 0.966, Precision = 0.059, AUROC = 0.970 | [84] |
| Urinary system | Hydronephrosis | Detection | ASM | 34 US images | DC (kidney) = 0.87 ± 0.05, DC (hydronephrosis) = 0.62 ± 0.32 | [90] |
| | Hydronephrosis | Diagnosis | GAM, GC | 8 pediatric patients | GAM: DC (kidney) = 0.86 ± 0.04, GC: DC (collecting system) = 0.62 ± 0.19 | [91] |
| | Hydronephrosis | Diagnosis | FAM | 39 3DUS images | DC (kidney) = 0.86 ± 0.05, DC (hydronephrosis) = 0.74 ± 0.10, Correlation coefficient = 0.92 | [92] |
| | Hydronephrosis | Diagnosis | ResNet-50, transfer learning | 1926 US images | Accuracy = 0.941 | [93] |
| | Kidney stones | Diagnosis | ResNet-50, transfer learning | 1926 US images | Accuracy = 0.932 | [93] |
| Cardiovascular system | Coarctation of aorta | Detection | SDAE, SVM | 26 pediatric patients | Sensitivity = 0.885, Specificity = 0.864 | [98] |

AI-driven detailed analysis and decision support in pediatric US

The integration of AI has significantly enhanced diagnostic accuracy and prognostic prediction across various systems and provided clinicians with comprehensive decision support. Furthermore, some exploratory studies have sought to combine quantitative results from DL models on anatomic structures, functional parameters, and tissue characteristics with automated report generation.

Digestive system

Acute appendicitis is one of the most common causes of emergency surgery in children and adolescents. While appendectomy remains the gold standard treatment, conservative management strategies for uncomplicated appendicitis have received increasing attention [106]. However, due to the non-specific nature of symptoms and signs, traditional diagnostic methods often rely heavily on expert interpretation, leading to diagnostic uncertainty [107]. In recent years AI has demonstrated considerable potential in enhancing diagnostic accuracy and supporting therapeutic decision-making, particularly through multimodal data integration and developing interpretable models. Reismann et al. developed a supervised learning-based biomarker diagnostic model for classifying the severity of acute appendicitis in children. Data from 590 patients, including complete blood counts, C-reactive protein (CRP) levels, and US-measured appendiceal diameters, were used for model training and validation. The results showed an accuracy of 0.51, a sensitivity of 0.95, a specificity of 0.33, and an AUC of 0.80 in distinguishing complicated from uncomplicated appendicitis. Although the accuracy was modest, the high sensitivity of the model effectively prioritized identifying complex cases requiring surgical intervention, thus reducing unnecessary appendectomies [108].
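
The combination of high sensitivity and modest accuracy follows from how accuracy weights the two classes. The sketch below assumes that the three reported metrics were computed on the same evaluation set with complicated appendicitis as the positive class. The implied prevalence it derives (roughly 29%) is an inference from rounded figures, not a value reported in the text.

```python
def accuracy_from_rates(sens, spec, prevalence):
    """Accuracy as the prevalence-weighted mean of sensitivity and specificity."""
    return sens * prevalence + spec * (1.0 - prevalence)


def implied_prevalence(acc, sens, spec):
    """Solve acc = sens * p + spec * (1 - p) for the positive-class share p."""
    if sens == spec:
        raise ValueError("prevalence is not identifiable when sens equals spec")
    return (acc - spec) / (sens - spec)


# Reported metrics for complicated versus uncomplicated appendicitis.
p = implied_prevalence(acc=0.51, sens=0.95, spec=0.33)
print(round(p, 2))
print(round(accuracy_from_rates(0.95, 0.33, p), 2))
```

When most cases are uncomplicated, the low specificity dominates the weighted mean, so accuracy stays near 0.5 even though few complicated cases are missed.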

In addition to multimodal data integration, the interpretability of AI models has emerged as a key focus for improving clinical decision support. Marcinkevies et al. proposed an interpretable ML model, the multi-view concept bottleneck model (MVCBM), and a semi-supervised extension of the MVCBM (SSMVCBM), to predict the diagnosis, treatment, and severity of pediatric appendicitis based on US images. The model was trained and validated on 1709 US scans combined with clinical data. The results showed that the extended MVCBM achieved an AUROC of 0.80 and an AUPR of 0.93 for predictive diagnosis. Thus, AI can assist in identifying complex cases and severity stratification of acute appendicitis and help clinicians manage diagnostic uncertainty with treatment decision optimization, leading to individualized treatment plans based on risk grading [109].

AI is also being explored for the automated generation of clinical reports to further optimize the diagnosis and treatment process for appendicitis. Traditional manual reporting is not only time-consuming but also subjective. AI frameworks can analyze US images, automatically extract key image-clinical elements, and generate structured, standardized reports, thereby significantly improving consistency and efficiency [110]. Notably, the introduction of LLMs has further expanded this potential. In the task of grading and structuring US reports for pediatric appendicitis, tools such as ChatGPT-4 have outperformed manual information extraction in terms of accuracy, generating reports approximately 40 times faster and significantly reducing the repetitive documentation burden on clinicians [111]. These results demonstrate that AI has a key role not only in diagnostic decision-making but also in facilitating standardization and process re-engineering in post-diagnostic informatics, benefiting the entire management process for acute appendicitis.

In addition to appendicitis, AI has shown substantial potential in diagnosing other acute abdominal conditions. Intussusception, particularly the ileocolic type, is a common pediatric emergency that typically necessitates prompt US diagnosis. However, manual diagnosis demands extensive expertise, especially when evaluating disease severity and determining surgical indications, like thickened adenomatous bowel walls, pneumoperitoneum, and peritonitis. Although DL methods can extract pathologic features from US videos for diagnosis, challenges persist in algorithmic speed, accounting for surgical indications, and the opacity of DL decision-making processes [112]. To address these issues, Pei et al. developed a modified YOLOv5 model that enables real-time diagnosis of pediatric intussusception based on the “concentric circle” and “sleeve” signs, evaluates severity, and provides surgical indications. The authors validated the model on 184 US videos, in which the average AUC for distinguishing between no intussusception, non-surgical, and surgical cases was 0.956. Clinical validation demonstrated that AI assistance improved the diagnostic AUC of junior ultrasonographers from 0.857 to 0.966, which is close to the level of senior physicians, and shortened the scanning time from 9.46 to 3.66 min. This study confirmed that a DL-driven real-time navigation system can significantly improve diagnostic efficiency and optimize clinical decision-making, particularly in emergency and resource-limited settings [113].

In addition, the quantitative precision of AI offers distinct advantages for chronic inflammatory diseases requiring long-term monitoring. Kumaralingam et al. developed an nnU-Net-based model to automatically measure bowel wall thickness (BWT) in pediatric inflammatory bowel disease (IBD) patients, addressing the challenge of assessing transmural lesions in children with thinner bowel walls. The study used 4565 intestinal US images for training and validation, and the model demonstrated optimal performance at a BWT threshold of 2 mm with a sensitivity of 0.9029 and a specificity of 0.9370 [114]. In conclusion, integrating AI in pediatric US examinations of digestive diseases optimizes the diagnostic workflow. AI-assisted US examinations provide real-time, accurate support for management and treatment decisions for complex diseases.

Respiratory system

Pneumonia, as the leading cause of infant mortality worldwide, requires early diagnosis for a better prognosis [115]. LUS is emerging as an essential tool for assessing respiratory distress in children due to the advantages of being radiation-free and bedside operable [116]. However, image quality is often degraded by noise and artifacts, inter- and intra-observer variability is high, and US systems differ between institutions and manufacturers. To address these challenges, developing advanced automated US image analysis methods is crucial to enhance the objectivity, accuracy, and intelligence of LUS-based diagnosis, evaluation, and image-guided interventions. Magrelli et al. developed an LUS image analysis system based on several deep convolutional neural networks (VGG19, Xception, Inception-v3, and Inception-ResNet-v2) to precisely classify pediatric pulmonary diseases. The authors used 9000 validated LUS images for training, testing, and validating the model. Among the models, Inception-v3 performed best in classifying healthy cases, bronchiolitis, and bacterial pneumonia, achieving an accuracy of 0.915, sensitivity of 0.915, and specificity of 0.959. This study demonstrated that a DL-based intelligent classification model can accurately classify pediatric lung diseases by extracting subtle US features in LUS, providing high-precision decision support for identifying pneumonia in children [117].

In addition, preterm infants often have respiratory disorders due to immature respiratory development with RDS and transient tachypnea of the newborn (TTN) being the most common [118]. Monitoring changes in respiratory status is crucial for personalized treatment. LUS, a radiation-free, cost-effective bedside imaging technique, is increasingly used in neonatal intensive care units [9]. AI enables automated interpretation of LUS images to assist clinicians in establishing diagnoses. Gravina et al. developed an automated neonatal pulmonary respiratory status assessment system using DL models (ResNet34 and EfficientNet-B0/B1/B2). An analysis of US videos from 86 neonates demonstrated that ResNet34 outperformed traditional texture analysis methods in classification tasks, achieving a Spearman correlation coefficient of 0.782 and an accuracy of 0.872 with clinical reference parameters (SpO2/FiO2) [119]. AI performs well in the quantitative analysis of neonatal lung function, which helps physicians take the next step in decision-making for treatment.

However, such studies have been limited by small sample sizes, underutilized advanced DL strategies, and a lack of standardized image acquisition and scoring systems [120]. These limitations have led to subjective interpretations and reduced model generalizability. Fatima et al. constructed two DL-based strategies to enhance clinical decision-making and improve patient outcomes, including a frame-to-video level method (F2V-FT) and direct video (DV) classification for severity assessment and decision support of neonatal RDS and TTN. Fatima et al. collected LUS data from 34 newborns for model training and testing. The F2V-FT strategy had 0.77 video classification accuracy and a kappa consistency coefficient of 0.52 with the manual assessment results using the ResNet-18 model. The DV strategy directly analyzed the spatio-temporal features of the video by using the TranSLUCEnT model and had a video classification accuracy of 0.72 with a kappa consistency coefficient of 0.62 [121].
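
A frame-to-video strategy needs a rule for turning per-frame predictions into a single video-level label. The aggregation rule used in F2V-FT is not described here, so the sketch below uses majority voting with ties resolved toward the more severe score; both choices are assumptions made for illustration only.

```python
from collections import Counter


def video_label_from_frames(frame_labels):
    """Aggregate per-frame severity scores into one video-level score by majority vote."""
    if not frame_labels:
        raise ValueError("a video needs at least one scored frame")
    counts = Counter(frame_labels)
    top_count = max(counts.values())
    # Assumed tie-break: prefer the higher (more severe) score among the most frequent ones.
    return max(label for label, count in counts.items() if count == top_count)


# Hypothetical frame-level severity scores for two short clips.
print(video_label_from_frames([0, 1, 1, 2, 1]))  # score 1 occurs most often
print(video_label_from_frames([2, 2, 0, 0]))     # tie between 0 and 2 resolves to 2
```

A direct video model avoids this aggregation step by learning from the frame sequence itself, at the cost of needing more labeled videos.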

In addition, Perri et al. developed a CNN-based model for predicting the need for nasal continuous positive airway pressure (CPAP) and surfactant therapy in neonates. The model, trained on 986 LUS images, achieved an image-level classification accuracy of 0.82. The model achieved an AUROC of 0.88 (sensitivity, 0.727; specificity, 0.828; and accuracy, 0.77) for CPAP requirement prediction at timepoint T0. The AUROC was 0.84 (sensitivity, 0.692; specificity, 0.898; accuracy, 0.86) for surfactant therapy prediction [122]. In conclusion, AI models achieved satisfactory results in predicting the evolution of respiratory disease severity and assessing therapeutic needs in neonates. Therefore, such models can be used to develop precise respiratory support strategies for preterm infants.

Skeletal system

DDH is a major type of hip joint abnormality in infants, for which early screening is critical to improve prognosis [123]. Given the limitations of physical examinations and X-rays, US has become the preferred method for neonatal DDH screening because it provides high-resolution imaging of subtle anatomic structures. Although the standardized US measurement system proposed by Graf in the 1980s, which is based on three landmark lines and α/β angle measurements within a specific plane, has been widely used for decades [124], its accuracy depends heavily on operator expertise, with significant subjective variability in identifying key anatomic landmarks, such as labrum positioning. To address this limitation, Sezer et al. developed a CNN-based automated classification system of neonatal hip US images for accurate typing of DDH. The authors used 675 US images to train and test the model. The model, enhanced by OBNLM denoising, achieved a classification accuracy of 0.977 with a sensitivity of 0.9617 and specificity of 0.9802 [125]. This study demonstrated that AI can differentiate DDH subclassifications with high accuracy, providing a high-precision automated analysis tool for clinical decision support.
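
Once the landmark lines of the Graf method have been located, the α and β angles reduce to the angle between two lines. The sketch below shows only this final geometric step. The point coordinates are hypothetical pixel positions, and locating the landmarks reliably is the difficult part that the cited models address.

```python
import math


def line_angle_deg(p1, p2, q1, q2):
    """Angle in degrees (0 to 90) between the line through p1, p2 and the line through q1, q2."""
    ux, uy = p2[0] - p1[0], p2[1] - p1[1]
    vx, vy = q2[0] - q1[0], q2[1] - q1[1]
    norm = math.hypot(ux, uy) * math.hypot(vx, vy)
    if norm == 0:
        raise ValueError("each line needs two distinct points")
    # The absolute value treats the lines as undirected, so the result is at most 90 degrees.
    cos_theta = abs(ux * vx + uy * vy) / norm
    return math.degrees(math.acos(min(1.0, cos_theta)))


# Hypothetical landmarks: a vertical baseline and a second line at 45 degrees to it.
baseline = ((0.0, 0.0), (0.0, 10.0))
roof_line = ((0.0, 0.0), (5.0, 5.0))
print(line_angle_deg(*baseline, *roof_line))
```

Small shifts in landmark position change the resulting angle by several degrees when the lines are short, which is one way operator variability enters manual measurements.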

Urinary system

US is the primary imaging modality for pediatric hydronephrosis, but its clinical utility is limited by subjective interpretation and a lack of strong correlation with functional imaging techniques [126]. Cerrolaza et al. developed a quantitative US analysis system based on SVM and logistic regression (LOG) models to predict the need for diuretic renal scintigraphy in children with hydronephrosis and improve diagnostic accuracy. The system was trained on US images from 50 pediatric patients and validated across different thresholds. At a threshold of 20 min, the system achieved a specificity of 0.94 and an AUC of 0.98, significantly outperforming the traditional SFU grading system and the hydronephrosis severity index. This method also reduced unnecessary diuretic renal scintigraphy by 62%, while ensuring that no severe obstruction cases were missed [127]. In addition, Sloan et al. developed a radiomics-based ML model using SVM to distinguish high-grade from low-grade hydronephrosis based on 592 US images. The model, which utilized texture and morphologic feature extraction, achieved an AUC of 0.86 with a sensitivity of 0.757, a specificity of 0.863, and an accuracy of 0.837 [128]. These AI models demonstrated strong performance in US-based hydronephrosis grading and may assist in clinical decision-making, minimize invasive procedures, and optimize the timing of surgical interventions.
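
Because the studies in this section report sensitivity, specificity, accuracy, and AUC side by side, a brief sketch of how these quantities relate may be useful. The code below uses the standard definitions; the counts and scores are hypothetical and do not come from any cited study.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Compute sensitivity, specificity, and accuracy from
    confusion-matrix counts (true/false positives and negatives)."""
    if min(tp, fp, tn, fn) < 0:
        raise ValueError("counts must be non-negative")
    positives = tp + fn  # cases that truly have the condition
    negatives = tn + fp  # cases that truly do not
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one positive and one negative case")
    return {
        "sensitivity": tp / positives,
        "specificity": tn / negatives,
        "accuracy": (tp + tn) / (positives + negatives),
    }


def auc_from_scores(pos_scores, neg_scores):
    """Rank-based AUC: the probability that a randomly chosen positive
    case receives a higher score than a randomly chosen negative case
    (ties count as one half)."""
    if not pos_scores or not neg_scores:
        raise ValueError("need scores for both classes")
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


# Hypothetical example: 40 diseased and 100 non-diseased cases.
m = diagnostic_metrics(tp=30, fp=10, tn=90, fn=10)
prevalence = 40 / 140
# Accuracy is the prevalence-weighted mix of sensitivity and specificity.
weighted = m["sensitivity"] * prevalence + m["specificity"] * (1 - prevalence)
print(m, weighted)
print(auc_from_scores([0.9, 0.8, 0.4], [0.7, 0.3, 0.2]))
```

The prevalence-weighted identity shows why accuracy alone can be misleading: a model with high sensitivity but low specificity will have modest accuracy whenever most cases are negative, so the three figures are best read together.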

Nervous system

Periventricular leukomalacia (PVL), a significant predictor of cerebral palsy in preterm infants, necessitates early detection for effective parental counseling and timely rehabilitation planning [129]. Cystic PVL is easily identifiable via cranial ultrasound (cUS), but identifying periventricular echogenicity (PVE), the precursor stage of PVL, remains challenging due to inherent subjectivity in US interpretation and variability in equipment settings and operator experience [130]. While PVE is typically transient and does not generally pose a risk for neurodevelopmental impairments, it may occasionally progress to PVL [131]. To address these limitations, Jung et al. developed a quantitative method using sequential cUS texture analysis for early PVL prediction in preterm infants. Twenty preterm infants were included in the study, and texture features of periventricular echogenic regions were analyzed with MaZda software. The method differentiated PVL from non-progressive PVE with an AUC of 0.820 and a specificity of 0.60 [3]. This finding suggests that AI-based models can assist in early PVL prediction, offering clinicians decision support to optimize treatment strategies and rehabilitation timing.

AI models can achieve satisfactory results in the detailed analysis and prediction of disease progression across different organ systems. Therefore, these models can support the development of effective treatment plans for pediatric patients with diseases of different systems. The abovementioned studies are summarized in Table 2.

Table 2 Summary of the Application of AI Models for Detailed Analysis and Decision Support of Pediatric Diseases

| Human Organ Systems | Diseases | Subject | AI Algorithms | Number of Samples | Reported Performance | Ref |
|---|---|---|---|---|---|---|
| Digestive system | Acute appendicitis | Classification | ML | 590 pediatric patients | Accuracy = 0.51, Sensitivity = 0.95, Specificity = 0.33, AUC = 0.80 | [108] |
| | Acute appendicitis | Prediction | ML | 1709 ultrasound images | AUROC = 0.80, AUPR = 0.92 | [109] |
| | Intussusception | Classification | Modified YOLOv5 | 184 ultrasound videos | Average AUC = 0.956 | [113] |
| | Inflammatory bowel disease | Assessment | nnU-Net | 4565 ultrasound images | Sensitivity = 0.9029, Specificity = 0.9370 | [114] |
| Respiratory system | Pneumonia | Classification | VGG19, Xception, Inception-v3, and Inception-ResNet-v2 | 9000 ultrasound images | Accuracy of Inception-v3 = 0.915, Sensitivity of Inception-v3 = 0.915, Specificity of Inception-v3 = 0.959 | [117] |
| | Respiratory distress syndrome, Transient tachypnea of the newborn | Classification | ResNet-18, TranSLUCEnT | 34 pediatric patients | ResNet-18: Accuracy = 0.77, Kappa = 0.52; TranSLUCEnT: Accuracy = 0.72, Kappa = 0.62 | [121] |
| | Respiratory distress syndrome | Prediction | CNN | 986 ultrasound images | AUROC = 0.88, Sensitivity = 0.727, Specificity = 0.828, Accuracy = 0.77 | [122] |
| Skeletal system | Developmental dysplasia of the hip | Classification | CNN | 675 ultrasound images | Accuracy = 0.977, Sensitivity = 0.9617, Specificity = 0.9802 | [125] |
| Urinary system | Hydronephrosis | Classification | SVM, LOG | 50 pediatric patients | Specificity = 0.94, AUC = 0.98 | [127] |
| | Hydronephrosis | Classification | SVM | 592 ultrasound images | AUC = 0.860, Sensitivity = 0.757, Specificity = 0.863, Accuracy = 0.837 | [128] |
| Nervous system | Periventricular leukomalacia | Prediction | TA | 20 pediatric patients | AUC = 0.820, Specificity = 0.60 | [3] |

Discussion

Technical advancement

In summary, AI applications across organ systems share notable technical characteristics in fundamental diagnosis and disease screening for pediatric US. First, DL is widely adopted as the core architecture owing to its high efficiency in identifying local anatomic structures and spatial patterns. CNNs and CNN variants demonstrate exceptional feature extraction capabilities across diverse applications, such as detecting intussusception in the digestive system, classifying pneumonia in the respiratory system, and identifying fractures in the skeletal system. Second, these systems overcome the limitations of static US image analysis by leveraging temporal modeling techniques to process dynamic imaging data. This approach effectively reduces the interference caused by motion artifacts and offers innovative solutions for real-time diagnosis. Third, transfer learning and data augmentation strategies have been widely adopted to address the issue of data sparsity in pediatric applications. These methods significantly enhance model generalizability, mitigate the challenges posed by limited data, and ultimately improve diagnostic accuracy and reliability. Building on these shared technical features, a cross-system intelligent pediatric US diagnostic framework may be constructed to achieve more accurate and personalized disease screening and diagnosis.
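
To make the idea of enlarging a small training set more concrete, the following is a minimal sketch of two simple image transformations applied to a grayscale frame. It assumes the image is stored as a nested list of intensities in [0, 1]. The function names, probabilities, and gain range are illustrative assumptions and do not reproduce the pipeline of any study cited here.

```python
import random


def horizontal_flip(image):
    """Mirror each row of the image left to right."""
    return [row[::-1] for row in image]


def scale_gain(image, gain):
    """Multiply pixel intensities by `gain` and clip to [0, 1],
    loosely mimicking a change in the scanner gain setting."""
    return [[min(1.0, max(0.0, px * gain)) for px in row] for row in image]


def augment(image, rng, flip_prob=0.5, gain_range=(0.8, 1.2)):
    """Return a randomly transformed copy of `image`.

    `rng` is a random.Random instance so results are reproducible.
    """
    out = [row[:] for row in image]  # copy so the input is not modified
    if rng.random() < flip_prob:
        out = horizontal_flip(out)
    gain = rng.uniform(gain_range[0], gain_range[1])
    return scale_gain(out, gain)


# Hypothetical 2 x 3 grayscale frame.
frame = [[0.1, 0.5, 0.9],
         [0.2, 0.4, 0.6]]
rng = random.Random(0)
variants = [augment(frame, rng) for _ in range(4)]
print(len(variants), len(variants[0]), len(variants[0][0]))
```

Whether a given transformation is appropriate depends on the clinical task. For example, flipping may be unsuitable where laterality or probe orientation carries diagnostic meaning, so any such choice would need to be validated for the specific application.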

Furthermore, AI has demonstrated significant commonalities and good results in detailed analysis and decision support for disorders across various systems in pediatric US. First, DL models, including CNN, the YOLO series, and U-Net, are the core technology in image classification, real-time diagnostics, and feature extraction. These models are widely applied in tasks such as intussusception navigation, pneumonia classification, 3D spinal reconstruction, and renal hydronephrosis segmentation. Second, multimodal data integration significantly enhances diagnostic accuracy. For example, in the digestive system, US features are integrated with clinical biomarkers to assess appendicitis severity, and in the respiratory system, LUS video and blood oxygenation parameters are combined to predict respiratory support requirements for preterm infants, enabling cross-modal decision-making. Finally, the implementation of quantitative analysis and standardized workflows reduces subjective variability. In the renal system, for example, objective grading is achieved through the automated calculation of hydronephrosis indices, whereas in the musculoskeletal system, individual parameters, such as infant gender and weight, are integrated to refine hip development assessments. Such methods lay the foundation for cross-system quantitative modeling. In the future, these technologies are expected to advance pediatric US toward intelligent and precise analysis of cross-system diseases and decision support.

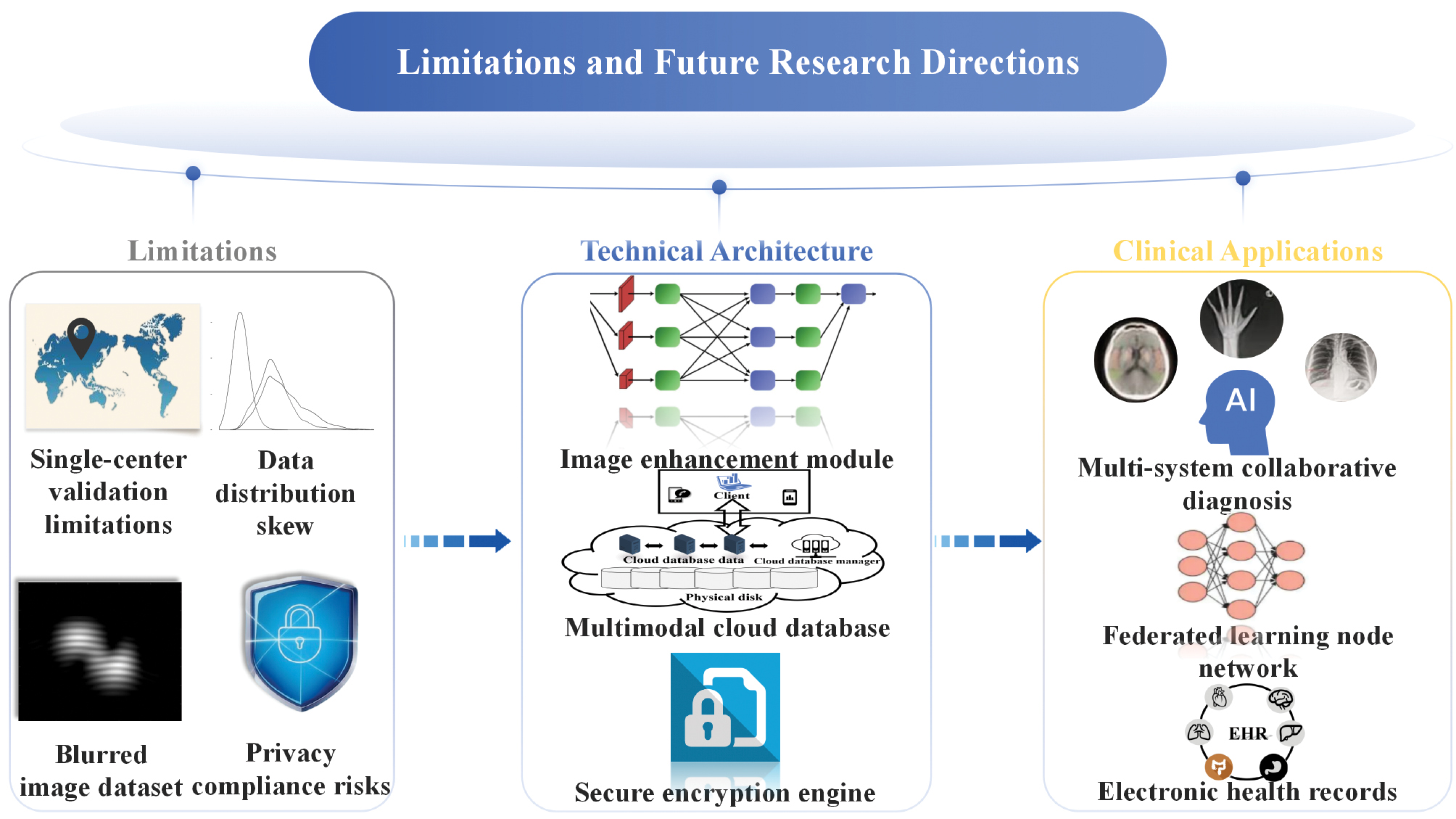

Limitations and challenges

The application of AI in pediatric US has been expanding from basic diagnosis to precise analysis and decision support across various pediatric systems. However, several challenges remain unmet. First, there are issues related to the quality of US image datasets. Some training, validation, and test sets used in AI research contain blurry or incomplete US images, which directly affect the accuracy of the study results [57, 114]. Second, external validation of algorithms faces various challenges. Algorithms validated and tested in single-center studies using specific US devices may underperform when applied in clinical diagnostics across regions or with multimodal US systems due to variations in image quality, US device parameters, and pediatric patient cooperation and compliance [111]. In addition, model generalizability and clinical applicability are limited by the current lack of multicenter validation, imaging differences across devices, and anatomic heterogeneity among pediatric age groups [132]. Third, some studies utilize small sample sizes, which introduce a risk of small-sample bias in AI models [8, 93]. Fourth, AI models may experience data distribution shifts. While models trained and validated on high-quality datasets are more likely to succeed, many studies rely on smaller or more commonly available datasets, which can introduce biases and reduce the external applicability of AI models [77, 133]. Finally, large-scale data sharing inevitably raises concerns about data privacy and ethical issues [134]. It is important to note that US imaging exhibits significant variability due to operator dependency and motion artifacts. This variability makes it unlikely that AI will fully replace human experts in the short term. Instead, AI should serve as a clinical decision support tool. By providing real-time annotation of anatomic structures, automatic identification of subtle lesions, and quantitative analysis, AI enhances diagnostic efficiency and consistency. The role of AI is therefore to augment, not replace, physician judgment [135] (Table 3).

Table 3 Limitations of AI in Pediatric Ultrasound

| Aspects of Clinical Practice | Limitations | Results | Potential Solutions |
|---|---|---|---|
| Dataset quality assurance | Inclusion of blurred or incomplete ultrasound images in AI datasets | Compromised model accuracy and reliability | Implement AI-driven image quality screening and standardized curation protocols |
| Algorithm generalizability | Variability in image quality, ultrasound device parameters, and pediatric patient compliance | Suboptimal performance in cross-regional diagnostics and multi-modal systems | Establish multicenter validation cohorts with standardized imaging protocols |
| Data collection variability | Variability in data collection and storage | Nonstandard data collection | Standardize data collection and storage methods |
| Model bias and generalization | Insufficient, non-diverse, or unevenly distributed training data | Model bias, poor generalization | Conduct multicenter studies, expand sample size |
| Ethical and legal concerns | Black box nature of AI | Distrust, legal risk, impact on clinical application | Enhance transparency, obtain informed consent |

Prospects for the future

Current studies indicate that AI can detect and segment anatomic structures and lesions in US images, capturing features such as lesion shape, which enables the automated quantification of US biomarkers and aids diagnostic support. AI-enhanced US can grade the severity of diseases, thereby assisting clinical decision-making. Moreover, there is a technical commonality in the application of AI for disease diagnosis, including DL models, dynamic image parsing, multimodal data fusion, and interpretable algorithms. Therefore, AI is expected to significantly improve the US diagnosis of multi-system diseases in pediatric patients. With the ongoing development of technologies as well as ethical and legal frameworks, AI-enhanced pediatric US is likely to become more widespread in clinical practice, especially in low-resource areas. By saving time, cost, and manpower and by reducing dependence on the expertise of radiologists, AI-enhanced pediatric US may improve equity in access to medical services.